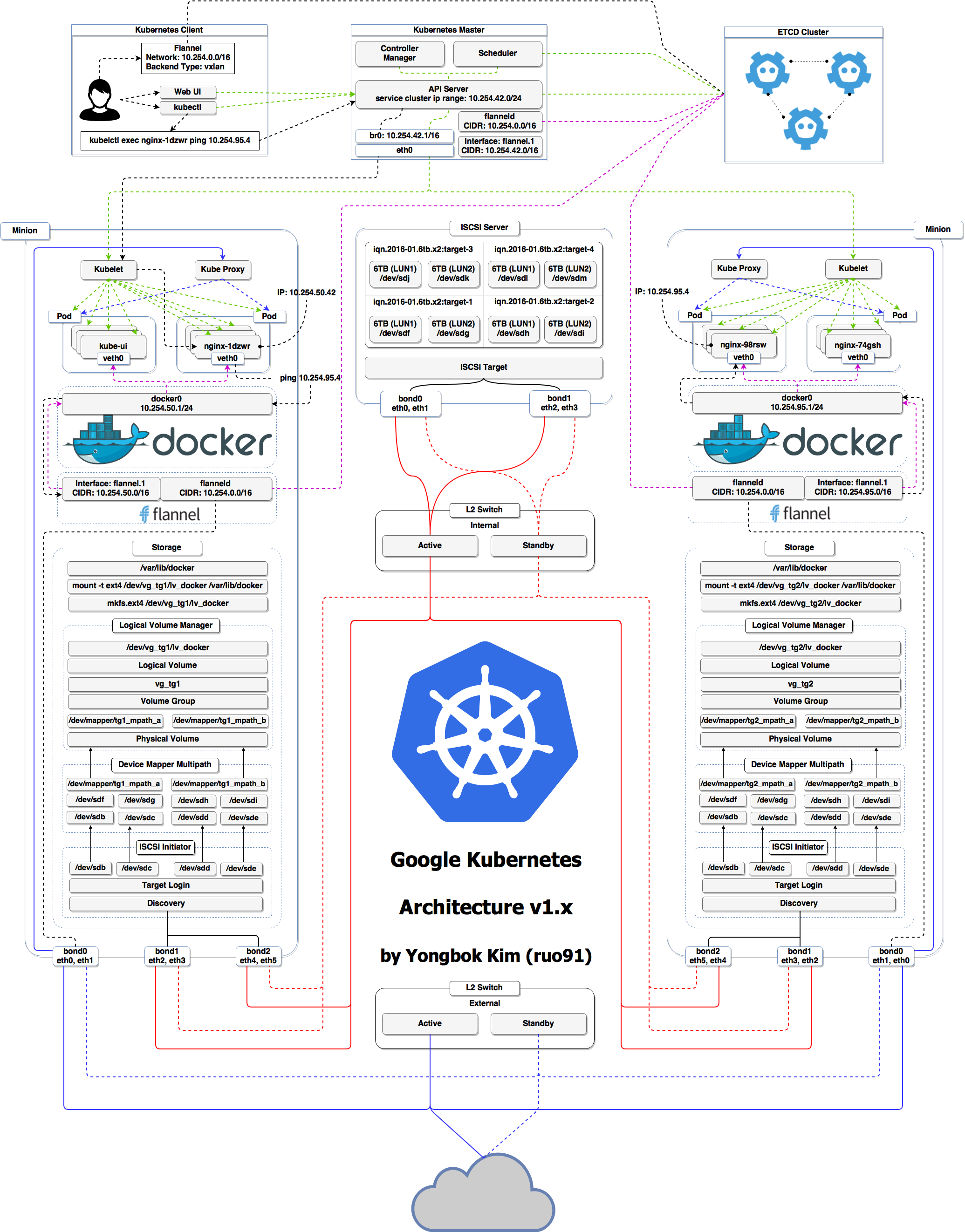

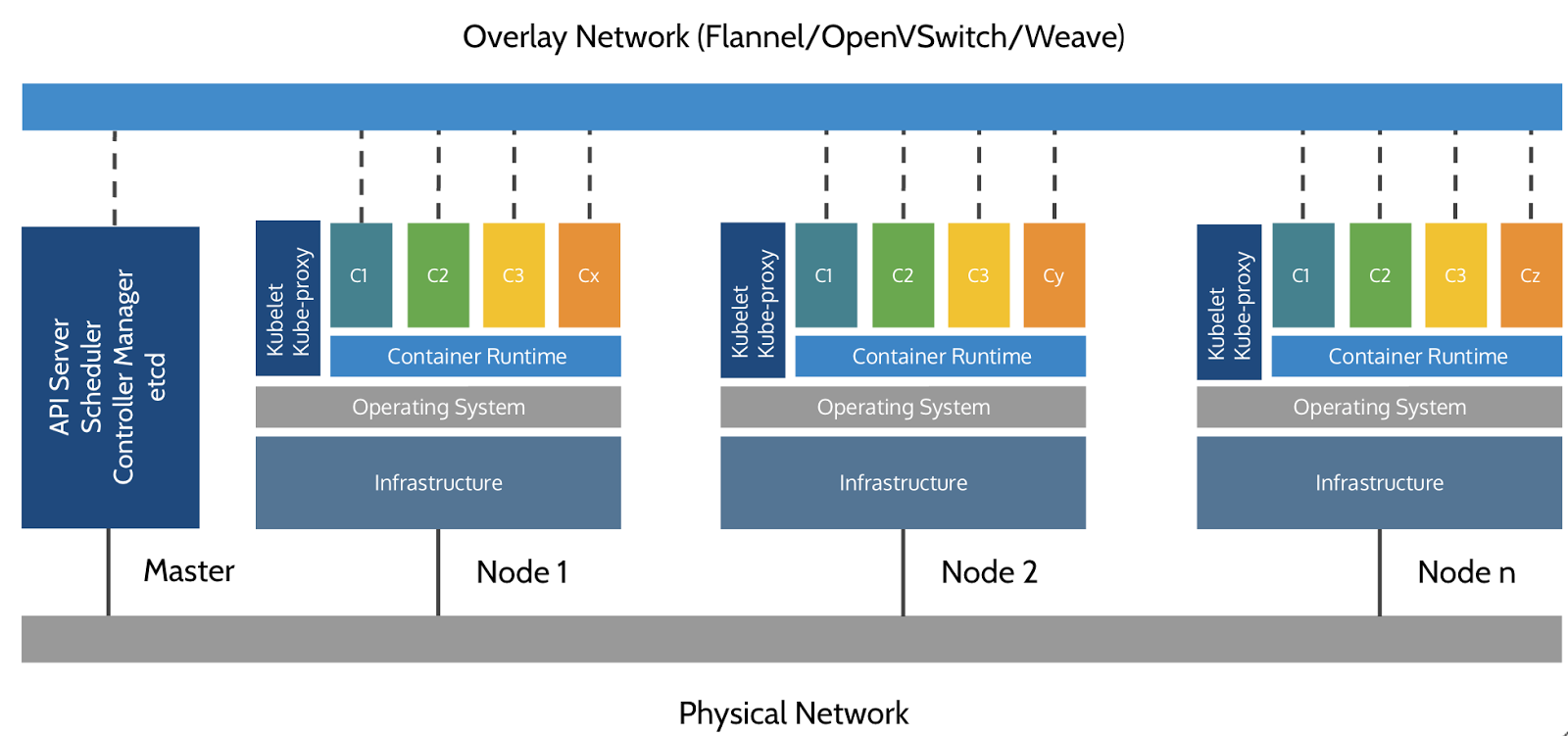

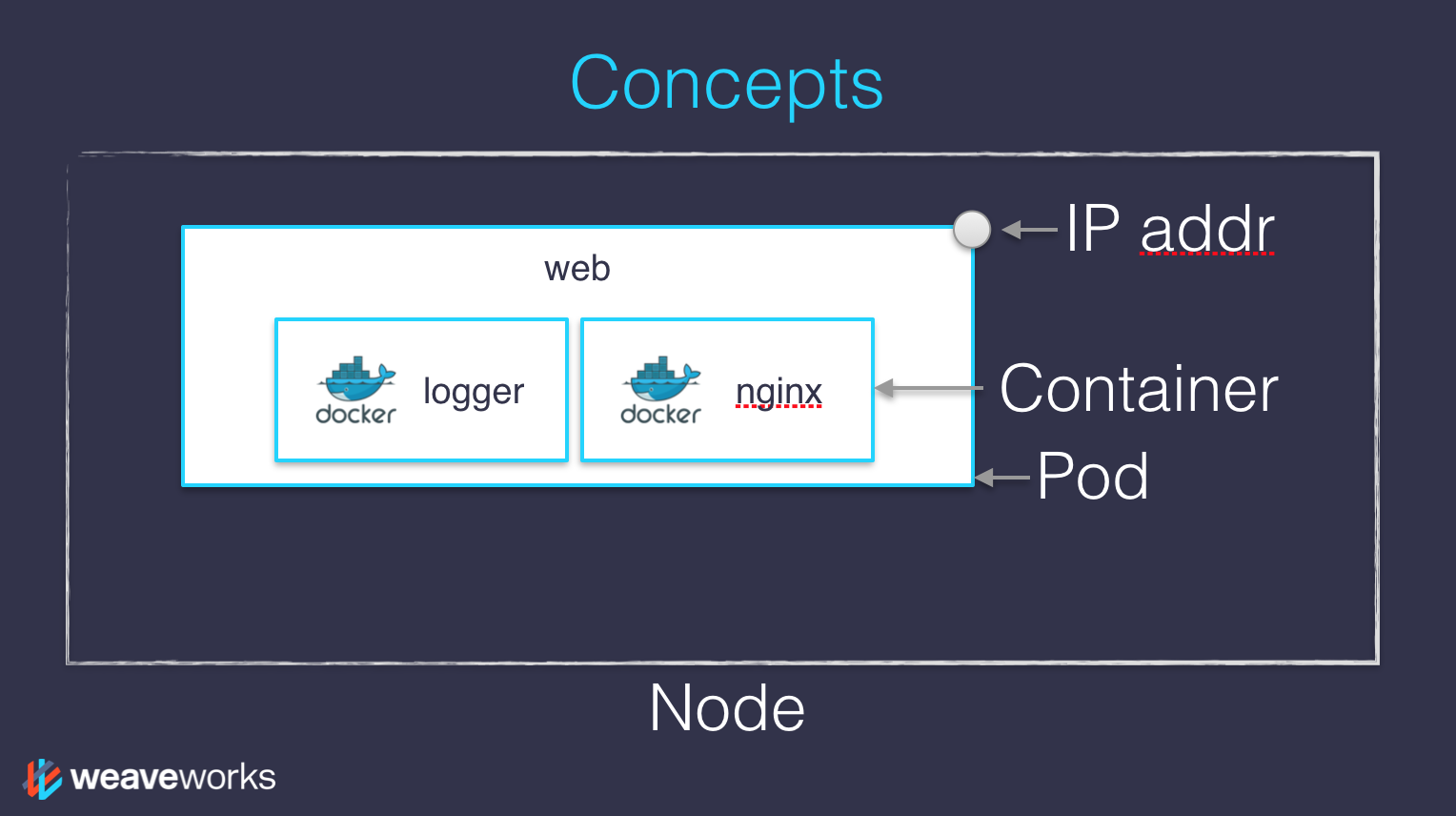

class: title, self-paced Kubernetes 101<br/> .nav[*Self-paced version*] .debug[ ``` ``` These slides have been built from commit: 64eaa7e [shared/title.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/title.md)] --- class: title, in-person Kubernetes 101<br/> WiFi: OReilly18<br> Password: velocity <br> <br> .footnote[ **Be kind to the WiFi!**<br/> <!-- *Use the 5G network.* --> *Don't use your hotspot.*<br/> *Don't stream videos or download big files during the workshop[.](https://www.youtube.com/watch?v=h16zyxiwDLY)*<br/> *Thank you!* **Slides: http://container.training/** ] .debug[[shared/title.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/title.md)] --- ## Intros - Hello! We are: - .emoji[✨] Bridget Kromhout ([@bridgetkromhout](https://twitter.com/bridgetkromhout)) - .emoji[💁🏻♀️] Karen Chu ([@karenhchu](https://twitter.com/karenhchu)) - .emoji[🌟] Ralph Squillace ([@ralph_squillace](https://twitter.com/ralph_squillace)) - The workshop will run from 9:00am - 12:30pm - There will be a break from 10:30am - 11:00am - Feel free to interrupt for questions at any time - *Especially when you see full screen container pictures!* .debug[[logistics-bridget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/logistics-bridget.md)] --- ## Say hi! - We encourage networking at [#velocityconf](https://twitter.com/hashtag/velocityconf?f=tweets&vertical=default&src=hash) - Take a minute to introduce yourself to your neighbors - Tell them where you're from (where you're based out of & what org you work at) - Share what you're hoping to learn in this session! 
.emoji[✨] .debug[[logistics-bridget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/logistics-bridget.md)] --- ## A brief introduction - This material was initially written by [Jérôme Petazzoni](https://twitter.com/jpetazzo) to support in-person, instructor-led workshops and tutorials - Credit is also due to [multiple contributors](https://github.com/jpetazzo/container.training/graphs/contributors), thank you! - You can also follow along on your own, at your own pace - We included as much information as possible in these slides - We recommend having a mentor to help you ... - ... Or being comfortable spending some time reading the Kubernetes [documentation](https://kubernetes.io/docs/) ... - ... And looking for answers on [StackOverflow](http://stackoverflow.com/questions/tagged/kubernetes) and other outlets .debug[[k8s/intro.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/intro.md)] --- class: self-paced ## Hands on, you shall practice - Nobody ever became a Jedi by spending their lives reading Wookieepedia - Likewise, it will take more than merely *reading* these slides to make you an expert - These slides include *tons* of exercises and examples - They assume that you have access to a Kubernetes cluster - If you are attending a workshop or tutorial: <br/>you will be given specific instructions to access your cluster - If you are doing this on your own: <br/>the first chapter will give you various options to get your own cluster .debug[[k8s/intro.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/intro.md)] --- ## About these slides - All the content is available in a public GitHub repository: https://github.com/jpetazzo/container.training - You can get updated "builds" of the slides there: http://container.training/ <!-- .exercise[ ```open https://github.com/jpetazzo/container.training``` ```open http://container.training/``` ] --> -- - Typos? Mistakes? Questions? 
Feel free to hover over the bottom of the slide ... .footnote[.emoji[👇] Try it! The source file will be shown and you can view it on GitHub and fork and edit it.] <!-- .exercise[ ```open https://github.com/jpetazzo/container.training/tree/master/slides/common/about-slides.md``` ] --> .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/about-slides.md)] --- class: extra-details ## Extra details - This slide has a little magnifying glass in the top left corner - This magnifying glass indicates slides that provide extra details - Feel free to skip them if: - you are in a hurry - you are new to this and want to avoid cognitive overload - you want only the most essential information - You can review these slides another time if you want, they'll be waiting for you ☺ .debug[[shared/about-slides.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/about-slides.md)] --- name: toc-chapter-1 ## Chapter 1 - [Our sample application](#toc-our-sample-application) - [Kubernetes concepts](#toc-kubernetes-concepts) - [Declarative vs imperative](#toc-declarative-vs-imperative) - [Kubernetes network model](#toc-kubernetes-network-model) - [First contact with `kubectl`](#toc-first-contact-with-kubectl) - [Setting up Kubernetes](#toc-setting-up-kubernetes) .debug[(auto-generated TOC)] --- name: toc-chapter-2 ## Chapter 2 - [Running our first containers on Kubernetes](#toc-running-our-first-containers-on-kubernetes) - [Exposing containers](#toc-exposing-containers) - [Deploying a self-hosted registry](#toc-deploying-a-self-hosted-registry) - [Exposing services internally](#toc-exposing-services-internally) - [Exposing services for external access](#toc-exposing-services-for-external-access) - [Accessing the API with `kubectl proxy`](#toc-accessing-the-api-with-kubectl-proxy) .debug[(auto-generated TOC)] --- name: toc-chapter-3 ## Chapter 3 - [The Kubernetes 
dashboard](#toc-the-kubernetes-dashboard) - [Security implications of `kubectl apply`](#toc-security-implications-of-kubectl-apply) - [Scaling a deployment](#toc-scaling-a-deployment) - [Daemon sets](#toc-daemon-sets) - [Updating a service through labels and selectors](#toc-updating-a-service-through-labels-and-selectors) - [Rolling updates](#toc-rolling-updates) .debug[(auto-generated TOC)] --- name: toc-chapter-4 ## Chapter 4 - [Accessing logs from the CLI](#toc-accessing-logs-from-the-cli) - [Managing stacks with Helm](#toc-managing-stacks-with-helm) - [Namespaces](#toc-namespaces) - [Network policies](#toc-network-policies) - [Links and resources](#toc-links-and-resources) - [Thank you!](#toc-thank-you) .debug[(auto-generated TOC)] .debug[[shared/toc.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/toc.md)] --- ## Hands-on - All hands-on sections are clearly identified, like the gray rectangle below .exercise[ - This is the stuff you're supposed to do! - Go to http://container.training/ to view these slides ] - Each person gets a private cluster of cloud VMs (not shared with anybody else) - All you need is a computer (or even a phone or tablet!), with: - an internet connection - a web browser - an SSH client .debug[[shared/prereqs.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/prereqs.md)] --- class: in-person ## Connecting to our lab environment .exercise[ - Log into the first VM (`node1`) with your SSH client - Check that you can SSH (without password) to `node2`: ```bash ssh node2 ``` - Type `exit` or `^D` to come back to `node1` ] If anything goes wrong — ask for help! 
.debug[[shared/prereqs.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/prereqs.md)] --- ## Versions installed - Kubernetes 1.12.0 - Docker Engine 18.06.1-ce - Docker Compose 1.21.1 .exercise[ - Check all installed versions: ```bash kubectl version docker version docker-compose -v ``` ] .debug[[k8s/versions-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/versions-k8s.md)] --- class: extra-details ## Kubernetes and Docker compatibility - Kubernetes 1.12.x only validates Docker Engine versions [1.11.2 to 1.13.1 and 17.03.x](https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.12.md#external-dependencies) -- class: extra-details - Are we living dangerously? -- class: extra-details - "Validates" = continuous integration builds - The Docker API is versioned, and offers strong backward-compatibility (If a client uses e.g. API v1.25, the Docker Engine will keep behaving the same way) .debug[[k8s/versions-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/versions-k8s.md)] --- class: pic .interstitial[] --- name: toc-our-sample-application class: title Our sample application .nav[ [Previous section](#toc-) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-kubernetes-concepts) ] .debug[(automatically generated title slide)] --- # Our sample application - We will clone the GitHub repository onto our `node1` - The repository also contains scripts and tools that we will use through the workshop .exercise[ <!-- ```bash cd ~ if [ -d container.training ]; then mv container.training container.training.$RANDOM fi ``` --> - Clone the repository on `node1`: ```bash git clone https://github.com/jpetazzo/container.training ``` ] (You can also fork the repository on GitHub and clone your fork if you prefer that.) 
.debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## Downloading and running the application Let's start this before we look around, as downloading will take a little time... .exercise[ - Go to the `dockercoins` directory, in the cloned repo: ```bash cd ~/container.training/dockercoins ``` - Use Compose to build and run all containers: ```bash docker-compose up ``` <!-- ```longwait units of work done``` --> ] Compose tells Docker to build all container images (pulling the corresponding base images), then starts all containers, and displays aggregated logs. .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## More detail on our sample application - Visit the GitHub repository with all the materials of this workshop: <br/>https://github.com/jpetazzo/container.training - The application is in the [dockercoins](https://github.com/jpetazzo/container.training/tree/master/dockercoins) subdirectory - Let's look at the general layout of the source code: there is a Compose file [docker-compose.yml](https://github.com/jpetazzo/container.training/blob/master/dockercoins/docker-compose.yml) ... ... and 4 other services, each in its own directory: - `rng` = web service generating random bytes - `hasher` = web service computing hash of POSTed data - `worker` = background process using `rng` and `hasher` - `webui` = web interface to watch progress .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## What's this application? -- - It is a DockerCoin miner! .emoji[💰🐳📦🚢] -- - No, you can't buy coffee with DockerCoins -- - How DockerCoins works: - `worker` asks `rng` to generate a few random bytes - `worker` feeds these bytes into `hasher` - and repeat forever! 
- every second, `worker` updates `redis` to indicate how many loops were done - `webui` queries `redis`, and computes and exposes "hashing speed" in your browser .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## Our application at work - On the left-hand side, the "rainbow strip" shows the container names - On the right-hand side, we see the output of our containers - We can see the `worker` service making requests to `rng` and `hasher` - For `rng` and `hasher`, we see HTTP access logs .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## Connecting to the web UI - "Logs are exciting and fun!" (No one, ever) - The `webui` container exposes a web dashboard; let's view it .exercise[ - With a web browser, connect to `node1` on port 8000 - Remember: the `nodeX` aliases are valid only on the nodes themselves - In your browser, you need to enter the IP address of your node <!-- ```open http://node1:8000``` --> ] A drawing area should show up, and after a few seconds, a blue graph will appear. .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- class: self-paced, extra-details ## If the graph doesn't load If you just see a `Page not found` error, it might be because your Docker Engine is running on a different machine. This can be the case if: - you are using the Docker Toolbox - you are using a VM (local or remote) created with Docker Machine - you are controlling a remote Docker Engine When you run DockerCoins in development mode, the web UI static files are mapped to the container using a volume. Alas, volumes can only work on a local environment, or when using Docker4Mac or Docker4Windows. How to fix this? Stop the app with `^C`, edit `docker-compose.yml`, comment out the `volumes` section, and try again. 
.debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## Stopping the application - If we interrupt Compose (with `^C`), it will politely ask the Docker Engine to stop the app - The Docker Engine will send a `TERM` signal to the containers - If the containers do not exit in a timely manner, the Engine sends a `KILL` signal .exercise[ - Stop the application by hitting `^C` <!-- ```keys ^C``` --> ] -- Some containers exit immediately, others take longer. The containers that do not handle `SIGTERM` end up being killed after a 10s timeout. If we are very impatient, we can hit `^C` a second time! .debug[[shared/sampleapp.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/sampleapp.md)] --- ## Clean up - Before moving on, let's remove those containers .exercise[ - Tell Compose to remove everything: ```bash docker-compose down ``` ] .debug[[shared/composedown.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/composedown.md)] --- class: pic .interstitial[] --- name: toc-kubernetes-concepts class: title Kubernetes concepts .nav[ [Previous section](#toc-our-sample-application) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-declarative-vs-imperative) ] .debug[(automatically generated title slide)] --- # Kubernetes concepts - Kubernetes is a container management system - It runs and manages containerized applications on a cluster -- - What does that really mean? 
.debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Basic things we can ask Kubernetes to do -- - Start 5 containers using image `atseashop/api:v1.3` -- - Place an internal load balancer in front of these containers -- - Start 10 containers using image `atseashop/webfront:v1.3` -- - Place a public load balancer in front of these containers -- - It's Black Friday (or Christmas), traffic spikes, grow our cluster and add containers -- - New release! Replace my containers with the new image `atseashop/webfront:v1.4` -- - Keep processing requests during the upgrade; update my containers one at a time .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Other things that Kubernetes can do for us - Basic autoscaling - Blue/green deployment, canary deployment - Long running services, but also batch (one-off) jobs - Overcommit our cluster and *evict* low-priority jobs - Run services with *stateful* data (databases etc.) 
- Fine-grained access control defining *what* can be done by *whom* on *which* resources - Integrating third party services (*service catalog*) - Automating complex tasks (*operators*) .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Kubernetes architecture .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- class: pic  .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Kubernetes architecture - Ha ha ha ha - OK, I was trying to scare you, it's much simpler than that ❤️ .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- class: pic  .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Credits - The first schema is a Kubernetes cluster with storage backed by multi-path iSCSI (Courtesy of [Yongbok Kim](https://www.yongbok.net/blog/)) - The second one is a simplified representation of a Kubernetes cluster (Courtesy of [Imesh Gunaratne](https://medium.com/containermind/a-reference-architecture-for-deploying-wso2-middleware-on-kubernetes-d4dee7601e8e)) .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Kubernetes architecture: the nodes - The nodes executing our containers run a collection of services: - a container Engine (typically Docker) - kubelet (the "node agent") - kube-proxy (a necessary but not sufficient network component) - Nodes were formerly called "minions" (You might see that word in older articles or documentation) .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Kubernetes 
architecture: the control plane - The Kubernetes logic (its "brains") is a collection of services: - the API server (our point of entry to everything!) - core services like the scheduler and controller manager - `etcd` (a highly available key/value store; the "database" of Kubernetes) - Together, these services form the control plane of our cluster - The control plane is also called the "master" .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Running the control plane on special nodes - It is common to reserve a dedicated node for the control plane (Except for single-node development clusters, like when using minikube) - This node is then called a "master" (Yes, this is ambiguous: is the "master" a node, or the whole control plane?) - Normal applications are restricted from running on this node (By using a mechanism called ["taints"](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/)) - When high availability is required, each service of the control plane must be resilient - The control plane is then replicated on multiple nodes (This is sometimes called a "multi-master" setup) .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Running the control plane outside containers - The services of the control plane can run in or out of containers - For instance: since `etcd` is a critical service, some people deploy it directly on a dedicated cluster (without containers) (This is illustrated on the first "super complicated" schema) - In some hosted Kubernetes offerings (e.g. 
AKS, GKE, EKS), the control plane is invisible (We only "see" a Kubernetes API endpoint) - In that case, there is no "master node" *For this reason, it is more accurate to say "control plane" rather than "master".* .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Default container runtime - By default, Kubernetes uses the Docker Engine to run containers - We could also use `rkt` ("Rocket") from CoreOS - Or leverage other pluggable runtimes through the *Container Runtime Interface* (like CRI-O, or containerd) .footnote[More information about CRI [on the Kubernetes blog](https://kubernetes.io/blog/2016/12/container-runtime-interface-cri-in-kubernetes)] .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- ## Kubernetes resources - The Kubernetes API defines a lot of objects called *resources* - These resources are organized by type, or `Kind` (in the API) - A few common resource types are: - node (a machine — physical or virtual — in our cluster) - pod (group of containers running together on a node) - IP addresses are associated with *pods*, not with individual containers - service (stable network endpoint to connect to one or multiple containers) - namespace (more-or-less isolated group of things) - secret (bundle of sensitive data to be passed to a container) - And much more! 
- We can see the full list by running `kubectl api-resources` (In Kubernetes 1.10 and prior, the command to list API resources was `kubectl get`) .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- class: pic  .debug[[k8s/concepts-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/concepts-k8s.md)] --- class: pic .interstitial[] --- name: toc-declarative-vs-imperative class: title Declarative vs imperative .nav[ [Previous section](#toc-kubernetes-concepts) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-kubernetes-network-model) ] .debug[(automatically generated title slide)] --- # Declarative vs imperative - Our container orchestrator puts a very strong emphasis on being *declarative* - Declarative: *I would like a cup of tea.* - Imperative: *Boil some water. Pour it in a teapot. Add tea leaves. Steep for a while. Serve in a cup.* -- - Declarative seems simpler at first ... -- - ... As long as you know how to brew tea .debug[[shared/declarative.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/declarative.md)] --- ## Declarative vs imperative - What declarative would really be: *I want a cup of tea, obtained by pouring an infusion¹ of tea leaves in a cup.* -- *¹An infusion is obtained by letting the object steep a few minutes in hot² water.* -- *²Hot liquid is obtained by pouring it in an appropriate container³ and setting it on a stove.* -- *³Ah, finally, containers! Something we know about. Let's get to work, shall we?* -- .footnote[Did you know there was an [ISO standard](https://en.wikipedia.org/wiki/ISO_3103) specifying how to brew tea?] 
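
To make the two styles concrete with containers instead of tea, here is a toy sketch. It assumes nothing about Kubernetes itself: the function name `reconcile` and its two arguments are hypothetical, and it only prints the action it would take. An imperative request says "start 2 more containers"; a declarative one says "I want 5 containers" and leaves the rest to a step like this one.

```bash
# Toy reconciliation step (hypothetical helper, not a Kubernetes API).
# $1 = desired number of containers, $2 = number currently observed.
# Prints the action that would close the gap between the two.
reconcile() {
  desired="$1"
  observed="$2"
  if [ "$observed" -lt "$desired" ]; then
    echo "start $((desired - observed))"
  elif [ "$observed" -gt "$desired" ]; then
    echo "stop $((observed - desired))"
  else
    echo "nothing to do"
  fi
}
```

For example, `reconcile 5 3` prints `start 2`, and `reconcile 5 5` prints `nothing to do`. Running the step again after an interruption is harmless, because it always starts from what is observed.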
.debug[[shared/declarative.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/declarative.md)] --- ## Declarative vs imperative - Imperative systems: - simpler - if a task is interrupted, we have to restart from scratch - Declarative systems: - if a task is interrupted (or if we show up to the party half-way through), we can figure out what's missing and do only what's necessary - we need to be able to *observe* the system - ... and compute a "diff" between *what we have* and *what we want* .debug[[shared/declarative.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/declarative.md)] --- ## Declarative vs imperative in Kubernetes - Virtually everything we create in Kubernetes is created from a *spec* - Watch for the `spec` fields in the YAML files later! - The *spec* describes *how we want the thing to be* - Kubernetes will *reconcile* the current state with the spec <br/>(technically, this is done by a number of *controllers*) - When we want to change some resource, we update the *spec* - Kubernetes will then *converge* that resource .debug[[k8s/declarative.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/declarative.md)] --- class: pic .interstitial[] --- name: toc-kubernetes-network-model class: title Kubernetes network model .nav[ [Previous section](#toc-declarative-vs-imperative) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-first-contact-with-kubectl) ] .debug[(automatically generated title slide)] --- # Kubernetes network model - TL;DR: *Our cluster (nodes and pods) is one big flat IP network.* -- - In detail: - all nodes must be able to reach each other, without NAT - all pods must be able to reach each other, without NAT - pods and nodes must be able to reach each other, without NAT - each pod is aware of its IP address (no NAT) - Kubernetes doesn't mandate any particular implementation 
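
One way to look at this flat network on a running cluster is to list node and pod addresses side by side. This is a minimal sketch, assuming `kubectl` is already configured as it is on `node1`; the function name `show_addresses` is hypothetical.

```bash
# Hypothetical helper: print node addresses, then pod addresses.
# With `-o wide`, `kubectl get` adds the IP address columns to its output.
show_addresses() {
  kubectl get nodes -o wide
  kubectl get pods --all-namespaces -o wide
}
```

Pods that use the host network report the address of their node; the other pods report an address taken from the pod network.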
.debug[[k8s/kubenet.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubenet.md)] --- ## Kubernetes network model: the good - Everything can reach everything - No address translation - No port translation - No new protocol - Pods cannot move from one node to another and keep their IP address - IP addresses don't have to be "portable" from one node to another (We can use e.g. a subnet per node and use a simple routed topology) - The specification is simple enough to allow many different implementations .debug[[k8s/kubenet.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubenet.md)] --- ## Kubernetes network model: the less good - Everything can reach everything - if you want security, you need to add network policies - the network implementation that you use needs to support them - There are literally dozens of implementations out there (15 are listed in the Kubernetes documentation) - Pods have level 3 (IP) connectivity, but *services* are level 4 (Services map to a single UDP or TCP port; no port ranges or arbitrary IP packets) - `kube-proxy` is on the data path when connecting to a pod or container, <br/>and it's not particularly fast (relies on userland proxying or iptables) .debug[[k8s/kubenet.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubenet.md)] --- ## Kubernetes network model: in practice - The nodes that we are using have been set up to use [Weave](https://github.com/weaveworks/weave) - We don't endorse Weave in any particular way; it just Works For Us - Don't worry about the warning about `kube-proxy` performance - Unless you: - routinely saturate 10G network interfaces - count packet rates in millions per second - run high-traffic VoIP or gaming platforms - do weird things that involve millions of simultaneous connections <br/>(in which case you're already familiar with kernel tuning) - If necessary, there are alternatives to `kube-proxy`; e.g. 
[`kube-router`](https://www.kube-router.io) .debug[[k8s/kubenet.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubenet.md)] --- ## The Container Network Interface (CNI) - The CNI has a well-defined [specification](https://github.com/containernetworking/cni/blob/master/SPEC.md#network-configuration) for network plugins - When a pod is created, Kubernetes delegates the network setup to CNI plugins - Typically, a CNI plugin will: - allocate an IP address (by calling an IPAM plugin) - add a network interface into the pod's network namespace - configure the interface as well as required routes etc. - Using multiple plugins can be done with "meta-plugins" like CNI-Genie or Multus - Not all CNI plugins are equal (e.g. they don't all implement network policies, which are required to isolate pods) .debug[[k8s/kubenet.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubenet.md)] --- class: pic .interstitial[] --- name: toc-first-contact-with-kubectl class: title First contact with `kubectl` .nav[ [Previous section](#toc-kubernetes-network-model) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-setting-up-kubernetes) ] .debug[(automatically generated title slide)] --- # First contact with `kubectl` - `kubectl` is (almost) the only tool we'll need to talk to Kubernetes - It is a rich CLI tool around the Kubernetes API (Everything you can do with `kubectl`, you can do directly with the API) - On our machines, there is a `~/.kube/config` file with: - the Kubernetes API address - the path to our TLS certificates used to authenticate - You can also use the `--kubeconfig` flag to pass a config file - Or directly `--server`, `--user`, etc. - `kubectl` can be pronounced "Cube C T L", "Cube cuttle", "Cube cuddle"... 
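
The configuration sources listed above can be inspected from the shell. The following is a minimal sketch, assuming `kubectl` is installed and that `KUBECONFIG`, if set, holds a single path; the helper names `kubeconfig_path` and `api_server` are hypothetical.

```bash
# Print the config file kubectl reads by default:
# $KUBECONFIG if it is set, otherwise ~/.kube/config.
# (Assumption: KUBECONFIG holds a single path, not a colon-separated list.)
kubeconfig_path() {
  echo "${KUBECONFIG:-$HOME/.kube/config}"
}

# Print the Kubernetes API address stored for the current context.
api_server() {
  kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}'
}
```

On `node1`, `api_server` should print the API address, and `kubectl --kubeconfig "$(kubeconfig_path)" get nodes` passes the same file explicitly.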
.debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## `kubectl get` - Let's look at our `Node` resources with `kubectl get`! .exercise[ - Look at the composition of our cluster: ```bash kubectl get node ``` - These commands are equivalent: ```bash kubectl get no kubectl get node kubectl get nodes ``` ] .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## Obtaining machine-readable output - `kubectl get` can output JSON, YAML, or be directly formatted .exercise[ - Give us more info about the nodes: ```bash kubectl get nodes -o wide ``` - Let's have some YAML: ```bash kubectl get no -o yaml ``` See that `kind: List` at the end? It's the type of our result! ] .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## (Ab)using `kubectl` and `jq` - It's super easy to build custom reports .exercise[ - Show the capacity of all our nodes as a stream of JSON objects: ```bash kubectl get nodes -o json | jq ".items[] | {name:.metadata.name} + .status.capacity" ``` ] .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## What's available? - `kubectl` has pretty good introspection facilities - We can list all available resource types by running `kubectl api-resources` <br/> (In Kubernetes 1.10 and prior, this command used to be `kubectl get`) - We can view details about a resource with: ```bash kubectl describe type/name kubectl describe type name ``` - We can view the definition for a resource type with: ```bash kubectl explain type ``` Each time, `type` can be singular, plural, or abbreviated type name. 
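
As a concrete instance of the commands above, here is a minimal sketch that applies them to the `Node` resources we listed earlier. The function name `inspect` is hypothetical, and the usage below assumes a node named `node1`, as in our lab environment.

```bash
# Hypothetical helper: show the definition of a resource type,
# then the details of one object of that type.
# $1 = resource type (singular, plural, or abbreviated), $2 = object name.
inspect() {
  kubectl explain "$1"
  kubectl describe "$1" "$2"
}
```

`inspect node node1` and `inspect no node1` run the same two commands with different spellings of the type. To drill down into one field of a definition, `kubectl explain` also accepts a path such as `node.spec`.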
.debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## Services - A *service* is a stable endpoint to connect to "something" (In the initial proposal, they were called "portals") .exercise[ - List the services on our cluster with one of these commands: ```bash kubectl get services kubectl get svc ``` ] -- There is already one service on our cluster: the Kubernetes API itself. .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## ClusterIP services - A `ClusterIP` service is internal, available from the cluster only - This is useful for introspection from within containers .exercise[ - Try to connect to the API: ```bash curl -k https://10.96.0.1 ``` - `-k` is used to skip certificate verification - Make sure to replace 10.96.0.1 with the CLUSTER-IP shown by `kubectl get svc` ] -- The error that we see is expected: the Kubernetes API requires authentication. .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## Listing running containers - Containers are manipulated through *pods* - A pod is a group of containers: - running together (on the same node) - sharing resources (RAM, CPU; but also network, volumes) .exercise[ - List pods on our cluster: ```bash kubectl get pods ``` ] -- *These are not the pods you're looking for.* But where are they?!? .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## Namespaces - Namespaces allow us to segregate resources .exercise[ - List the namespaces on our cluster with one of these commands: ```bash kubectl get namespaces kubectl get namespace kubectl get ns ``` ] -- *You know what ... 
This `kube-system` thing looks suspicious.* .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## Accessing namespaces - By default, `kubectl` uses the `default` namespace - We can switch to a different namespace with the `-n` option .exercise[ - List the pods in the `kube-system` namespace: ```bash kubectl -n kube-system get pods ``` ] -- *Ding ding ding ding ding!* The `kube-system` namespace is used for the control plane. .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## What are all these control plane pods? - `etcd` is our etcd server - `kube-apiserver` is the API server - `kube-controller-manager` and `kube-scheduler` are other master components - `coredns` provides DNS-based service discovery ([replacing kube-dns as of 1.11](https://kubernetes.io/blog/2018/07/10/coredns-ga-for-kubernetes-cluster-dns/)) - `kube-proxy` is the (per-node) component managing port mappings and such - `weave` is the (per-node) component managing the network overlay - the `READY` column indicates the number of containers in each pod - the pods with a name ending with `-node1` are the master components <br/> (they have been specifically "pinned" to the master node) .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- ## What about `kube-public`? .exercise[ - List the pods in the `kube-public` namespace: ```bash kubectl -n kube-public get pods ``` ] -- - Maybe it doesn't have pods, but what secrets is `kube-public` keeping? 
-- .exercise[ - List the secrets in the `kube-public` namespace: ```bash kubectl -n kube-public get secrets ``` ] -- - `kube-public` is created by kubeadm & [used for security bootstrapping](https://kubernetes.io/blog/2017/01/stronger-foundation-for-creating-and-managing-kubernetes-clusters) .debug[[k8s/kubectlget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlget.md)] --- class: pic .interstitial[] --- name: toc-setting-up-kubernetes class: title Setting up Kubernetes .nav[ [Previous section](#toc-first-contact-with-kubectl) | [Back to table of contents](#toc-chapter-1) | [Next section](#toc-running-our-first-containers-on-kubernetes) ] .debug[(automatically generated title slide)] --- # Setting up Kubernetes - How did we set up these Kubernetes clusters that we're using? -- - We used `kubeadm` on freshly installed VM instances running Ubuntu 16.04 LTS 1. Install Docker 2. Install Kubernetes packages 3. Run `kubeadm init` on the first node (it deploys the control plane on that node) 4. Set up Weave (the overlay network) <br/> (that step is just one `kubectl apply` command; discussed later) 5. Run `kubeadm join` on the other nodes (with the token produced by `kubeadm init`) 6. Copy the configuration file generated by `kubeadm init` - Check the [prepare VMs README](https://github.com/jpetazzo/container.training/blob/master/prepare-vms/README.md) for more details .debug[[k8s/setup-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/setup-k8s.md)] --- ## `kubeadm` drawbacks - Doesn't set up Docker or any other container engine - Doesn't set up the overlay network - Doesn't set up multi-master (no high availability) -- (At least ... not yet! Though it's [experimental in 1.12](https://kubernetes.io/docs/setup/independent/high-availability/).) 
.debug[[k8s/setup-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/setup-k8s.md)] --- ## Other deployment options - If you are on Azure: [AKS](https://azure.microsoft.com/services/kubernetes-service/) - If you are on Google Cloud: [GKE](https://cloud.google.com/kubernetes-engine/) - If you are on AWS: [EKS](https://aws.amazon.com/eks/) or [kops](https://github.com/kubernetes/kops) - On a local machine: [minikube](https://kubernetes.io/docs/setup/minikube/), [kubespawn](https://github.com/kinvolk/kube-spawn), [Docker4Mac](https://docs.docker.com/docker-for-mac/kubernetes/) - If you want something customizable: [kubicorn](https://github.com/kubicorn/kubicorn) Probably the closest to a multi-cloud/hybrid solution so far, but in development .debug[[k8s/setup-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/setup-k8s.md)] --- ## Even more deployment options - If you like Ansible: [kubespray](https://github.com/kubernetes-incubator/kubespray) - If you like Terraform: [typhoon](https://github.com/poseidon/typhoon) - If you like Terraform and Puppet: [tarmak](https://github.com/jetstack/tarmak) - You can also learn how to install every component manually, with the excellent tutorial [Kubernetes The Hard Way](https://github.com/kelseyhightower/kubernetes-the-hard-way) *Kubernetes The Hard Way is optimized for learning, which means taking the long route to ensure you understand each task required to bootstrap a Kubernetes cluster.* - There are also many commercial options available! - For a longer list, check the Kubernetes documentation: <br/> it has a great guide to [pick the right solution](https://kubernetes.io/docs/setup/pick-right-solution/) to set up Kubernetes. 
.debug[[k8s/setup-k8s.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/setup-k8s.md)] --- class: pic .interstitial[] --- name: toc-running-our-first-containers-on-kubernetes class: title Running our first containers on Kubernetes .nav[ [Previous section](#toc-setting-up-kubernetes) | [Back to table of contents](#toc-chapter-2) | [Next section](#toc-exposing-containers) ] .debug[(automatically generated title slide)] --- # Running our first containers on Kubernetes - First things first: we cannot run a container -- - We are going to run a pod, and in that pod there will be a single container -- - In that container in the pod, we are going to run a simple `ping` command - Then we are going to start additional copies of the pod .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Starting a simple pod with `kubectl run` - We need to specify at least a *name* and the image we want to use .exercise[ - Let's ping `1.1.1.1`, Cloudflare's [public DNS resolver](https://blog.cloudflare.com/announcing-1111/): ```bash kubectl run pingpong --image alpine ping 1.1.1.1 ``` <!-- ```hide kubectl wait deploy/pingpong --for condition=available``` --> ] -- (Starting with Kubernetes 1.12, we get a message telling us that `kubectl run` is deprecated. Let's ignore it for now.) 
.debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Behind the scenes of `kubectl run` - Let's look at the resources that were created by `kubectl run` .exercise[ - List most resource types: ```bash kubectl get all ``` ] -- We should see the following things: - `deployment.apps/pingpong` (the *deployment* that we just created) - `replicaset.apps/pingpong-xxxxxxxxxx` (a *replica set* created by the deployment) - `pod/pingpong-xxxxxxxxxx-yyyyy` (a *pod* created by the replica set) Note: as of 1.10.1, resource types are displayed in more detail. .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## What are these different things? - A *deployment* is a high-level construct - allows scaling, rolling updates, rollbacks - multiple deployments can be used together to implement a [canary deployment](https://kubernetes.io/docs/concepts/cluster-administration/manage-deployment/#canary-deployments) - delegates pods management to *replica sets* - A *replica set* is a low-level construct - makes sure that a given number of identical pods are running - allows scaling - rarely used directly - A *replication controller* is the (deprecated) predecessor of a replica set .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Our `pingpong` deployment - `kubectl run` created a *deployment*, `deployment.apps/pingpong` ``` NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE deployment.apps/pingpong 1 1 1 1 10m ``` - That deployment created a *replica set*, `replicaset.apps/pingpong-xxxxxxxxxx` ``` NAME DESIRED CURRENT READY AGE replicaset.apps/pingpong-7c8bbcd9bc 1 1 1 10m ``` - That replica set created a *pod*, `pod/pingpong-xxxxxxxxxx-yyyyy` ``` NAME READY STATUS RESTARTS AGE pod/pingpong-7c8bbcd9bc-6c9qz 1/1 Running 0 10m ``` - We'll see later how these folks 
play together for:

  - scaling, high availability, rolling updates

.debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)]
---

## Viewing container output

- Let's use the `kubectl logs` command

- We will pass either a *pod name*, or a *type/name*

  (E.g. if we specify a deployment or replica set, it will get the first pod in it)

- Unless specified otherwise, it will only show logs of the first container in the pod

  (Good thing there's only one in ours!)

.exercise[

- View the result of our `ping` command:
  ```bash
  kubectl logs deploy/pingpong
  ```

]

.debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)]
---

## Streaming logs in real time

- Just like `docker logs`, `kubectl logs` supports convenient options:

  - `-f`/`--follow` to stream logs in real time (à la `tail -f`)

  - `--tail` to indicate how many lines you want to see (from the end)

  - `--since` to get only the logs newer than a relative duration (e.g. `10s` or `5m`); `--since-time` takes an absolute timestamp

.exercise[

- View the latest logs of our `ping` command:
  ```bash
  kubectl logs deploy/pingpong --tail 1 --follow
  ```

<!--
```wait seq=3```
```keys ^C```
-->

]

.debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)]
---

## Scaling our application

- We can create additional copies of our container (I mean, our pod) with `kubectl scale`

.exercise[

- Scale our `pingpong` deployment:
  ```bash
  kubectl scale deploy/pingpong --replicas 8
  ```

]

Note: what if we tried to scale `replicaset.apps/pingpong-xxxxxxxxxx`?

We could! But the *deployment* would notice it right away, and scale back to the initial level.
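If we want to script around the scale-up, here is a minimal sketch (not part of the original workshop) that polls the deployment until the expected number of replicas is ready. The helper name `wait_for_replicas` and its retry count are assumptions made for this example; only `kubectl get ... -o jsonpath` is standard.

```bash
# Hypothetical helper (not part of kubectl): wait until a deployment
# reports the wanted number of ready replicas.
# Usage: wait_for_replicas DEPLOYMENT COUNT [TRIES]
wait_for_replicas() {
  deploy=$1
  want=$2
  tries=${3:-30}   # assumption: one attempt per second, 30 attempts by default
  while [ "$tries" -gt 0 ]; do
    # .status.readyReplicas is empty while no replica is ready yet
    ready=$(kubectl get deploy "$deploy" -o jsonpath='{.status.readyReplicas}')
    if [ "${ready:-0}" -eq "$want" ]; then
      return 0
    fi
    tries=$((tries - 1))
    sleep 1
  done
  return 1
}

# Example:
#   kubectl scale deploy/pingpong --replicas 8 && wait_for_replicas pingpong 8
```

For a deployment, `kubectl rollout status deploy/pingpong` is a built-in alternative that also blocks until the rollout has converged.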
.debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Resilience - The *deployment* `pingpong` watches its *replica set* - The *replica set* ensures that the right number of *pods* are running - What happens if pods disappear? .exercise[ - In a separate window, list pods, and keep watching them: ```bash kubectl get pods -w ``` <!-- ```wait Running``` ```keys ^C``` ```hide kubectl wait deploy pingpong --for condition=available``` ```keys kubectl delete pod ping``` ```copypaste pong-..........-.....``` --> - Destroy a pod: ``` kubectl delete pod pingpong-xxxxxxxxxx-yyyyy ``` ] .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## What if we wanted something different? - What if we wanted to start a "one-shot" container that *doesn't* get restarted? - We could use `kubectl run --restart=OnFailure` or `kubectl run --restart=Never` - These commands would create *jobs* or *pods* instead of *deployments* - Under the hood, `kubectl run` invokes "generators" to create resource descriptions - We could also write these resource descriptions ourselves (typically in YAML), <br/>and create them on the cluster with `kubectl apply -f` (discussed later) - With `kubectl run --schedule=...`, we can also create *cronjobs* .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## What about that deprecation warning? 
- As we can see from the previous slide, `kubectl run` can do many things - The exact type of resource created is not obvious - To make things more explicit, it is better to use `kubectl create`: - `kubectl create deployment` to create a deployment - `kubectl create job` to create a job - Eventually, `kubectl run` will be used only to start one-shot pods (see https://github.com/kubernetes/kubernetes/pull/68132) .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Various ways of creating resources - `kubectl run` - easy way to get started - versatile - `kubectl create <resource>` - explicit, but lacks some features - can't create a CronJob - can't pass command-line arguments to deployments - `kubectl create -f foo.yaml` or `kubectl apply -f foo.yaml` - all features are available - requires writing YAML .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Viewing logs of multiple pods - When we specify a deployment name, only one single pod's logs are shown - We can view the logs of multiple pods by specifying a *selector* - A selector is a logic expression using *labels* - Conveniently, when you `kubectl run somename`, the associated objects have a `run=somename` label .exercise[ - View the last line of log from all pods with the `run=pingpong` label: ```bash kubectl logs -l run=pingpong --tail 1 ``` ] Unfortunately, `--follow` cannot (yet) be used to stream the logs from multiple containers. .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- ## Aren't we flooding 1.1.1.1? - If you're wondering this, good question! - Don't worry, though: *APNIC's research group held the IP addresses 1.1.1.1 and 1.0.0.1. 
While the addresses were valid, so many people had entered them into various random systems that they were continuously overwhelmed by a flood of garbage traffic. APNIC wanted to study this garbage traffic but any time they'd tried to announce the IPs, the flood would overwhelm any conventional network.* (Source: https://blog.cloudflare.com/announcing-1111/) - It's very unlikely that our concerted pings manage to produce even a modest blip at Cloudflare's NOC! .debug[[k8s/kubectlrun.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlrun.md)] --- class: pic .interstitial[] --- name: toc-exposing-containers class: title Exposing containers .nav[ [Previous section](#toc-running-our-first-containers-on-kubernetes) | [Back to table of contents](#toc-chapter-2) | [Next section](#toc-deploying-a-self-hosted-registry) ] .debug[(automatically generated title slide)] --- # Exposing containers - `kubectl expose` creates a *service* for existing pods - A *service* is a stable address for a pod (or a bunch of pods) - If we want to connect to our pod(s), we need to create a *service* - Once a service is created, CoreDNS will allow us to resolve it by name (i.e. 
after creating service `hello`, the name `hello` will resolve to something)

- There are different types of services, detailed on the following slides:

  `ClusterIP`, `NodePort`, `LoadBalancer`, `ExternalName`

.debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)]
---

## Basic service types

- `ClusterIP` (default type)

  - a virtual IP address is allocated for the service (in an internal, private range)
  - this IP address is reachable only from within the cluster (nodes and pods)
  - our code can connect to the service using the original port number

- `NodePort`

  - a port is allocated for the service (by default, in the 30000-32767 range)
  - that port is made available *on all our nodes* and anybody can connect to it
  - our code must be changed to connect to that new port number

These service types are always available.

Under the hood: `kube-proxy` is using a userland proxy and a bunch of `iptables` rules.

.debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)]
---

## More service types

- `LoadBalancer`

  - an external load balancer is allocated for the service
  - the load balancer is configured accordingly
    <br/>(e.g.: a `NodePort` service is created, and the load balancer sends traffic to that port)
  - available only when the underlying infrastructure provides some "load balancer as a service"
    <br/>(e.g. AWS, Azure, GCE, OpenStack...)
- `ExternalName` - the DNS entry managed by CoreDNS will just be a `CNAME` to a provided record - no port, no IP address, no nothing else is allocated .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- ## Running containers with open ports - Since `ping` doesn't have anything to connect to, we'll have to run something else .exercise[ - Start a bunch of HTTP servers: ```bash kubectl run httpenv --image=jpetazzo/httpenv --replicas=10 ``` - Watch them being started: ```bash kubectl get pods -w ``` <!-- ```wait httpenv-``` ```keys ^C``` --> ] The `jpetazzo/httpenv` image runs an HTTP server on port 8888. <br/> It serves its environment variables in JSON format. The `-w` option "watches" events happening on the specified resources. .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- ## Exposing our deployment - We'll create a default `ClusterIP` service .exercise[ - Expose the HTTP port of our server: ```bash kubectl expose deploy/httpenv --port 8888 ``` - Look up which IP address was allocated: ```bash kubectl get svc ``` ] .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- ## Services are layer 4 constructs - You can assign IP addresses to services, but they are still *layer 4* (i.e. a service is not an IP address; it's an IP address + protocol + port) - This is caused by the current implementation of `kube-proxy` (it relies on mechanisms that don't support layer 3) - As a result: you *have to* indicate the port number for your service - Running services with arbitrary port (or port ranges) requires hacks (e.g. 
host networking mode) .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- ## Testing our service - We will now send a few HTTP requests to our pods .exercise[ - Let's obtain the IP address that was allocated for our service, *programmatically:* ```bash IP=$(kubectl get svc httpenv -o go-template --template '{{ .spec.clusterIP }}') ``` <!-- ```hide kubectl wait deploy httpenv --for condition=available``` --> - Send a few requests: ```bash curl http://$IP:8888/ ``` - Too much output? Filter it with `jq`: ```bash curl -s http://$IP:8888/ | jq .HOSTNAME ``` ] -- Try it a few times! Our requests are load balanced across multiple pods. .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: extra-details ## If we don't need a load balancer - Sometimes, we want to access our scaled services directly: - if we want to save a tiny little bit of latency (typically less than 1ms) - if we need to connect over arbitrary ports (instead of a few fixed ones) - if we need to communicate over another protocol than UDP or TCP - if we want to decide how to balance the requests client-side - ... 
- In that case, we can use a "headless service" .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: extra-details ## Headless services - A headless service is obtained by setting the `clusterIP` field to `None` (Either with `--cluster-ip=None`, or by providing a custom YAML) - As a result, the service doesn't have a virtual IP address - Since there is no virtual IP address, there is no load balancer either - CoreDNS will return the pods' IP addresses as multiple `A` records - This gives us an easy way to discover all the replicas for a deployment .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: extra-details ## Services and endpoints - A service has a number of "endpoints" - Each endpoint is a host + port where the service is available - The endpoints are maintained and updated automatically by Kubernetes .exercise[ - Check the endpoints that Kubernetes has associated with our `httpenv` service: ```bash kubectl describe service httpenv ``` ] In the output, there will be a line starting with `Endpoints:`. That line will list a bunch of addresses in `host:port` format. 
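To get the same addresses in a script-friendly form, a sketch like the following reads them from the `endpoints` object itself. The function name `endpoint_ips` is made up for this example, and it assumes that the service has at least one ready pod behind it.

```bash
# Hypothetical helper: print the pod IP addresses behind a service, one per line.
# Usage: endpoint_ips SERVICE
endpoint_ips() {
  # jsonpath prints the addresses separated by spaces;
  # tr then puts each address on its own line
  kubectl get endpoints "$1" -o jsonpath='{.subsets[*].addresses[*].ip}' | tr ' ' '\n'
}

# Example:
#   endpoint_ips httpenv
```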
.debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: extra-details ## Viewing endpoint details - When we have many endpoints, our display commands truncate the list ```bash kubectl get endpoints ``` - If we want to see the full list, we can use one of the following commands: ```bash kubectl describe endpoints httpenv kubectl get endpoints httpenv -o yaml ``` - These commands will show us a list of IP addresses - These IP addresses should match the addresses of the corresponding pods: ```bash kubectl get pods -l run=httpenv -o wide ``` .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: extra-details ## `endpoints` not `endpoint` - `endpoints` is the only resource that cannot be singular ```bash $ kubectl get endpoint error: the server doesn't have a resource type "endpoint" ``` - This is because the type itself is plural (unlike every other resource) - There is no `endpoint` object: `type Endpoints struct` - The type doesn't represent a single endpoint, but a list of endpoints .debug[[k8s/kubectlexpose.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlexpose.md)] --- class: title Our app on Kube .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## The plan - Build on our control node (`node1`) - Tag images so that they are named `$REGISTRY/servicename` - Upload them to a registry - Create deployments using the images - Expose (with a ClusterIP) the services that need to communicate - Expose (with a NodePort) the WebUI .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Which registry do we want to use? 
- We could use the Docker Hub

- Or a service offered by our cloud provider (ACR, GCR, ECR...)

- Or we could just self-host that registry

*We'll self-host the registry because it's the most generic solution for this workshop.*

.debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)]
---

## Using the open source registry

- We need to run a `registry` container

- It will store images and layers to the local filesystem
  <br/>(but you can add a config file to use S3, Swift, etc.)

- Docker *requires* TLS when communicating with the registry

  - except for registries on `127.0.0.0/8` (i.e. `localhost`)

  - or when the Engine is started with the `--insecure-registry` flag

- Our strategy: publish the registry container on a NodePort,
  <br/>so that it's available through `127.0.0.1:xxxxx` on each node

.debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)]
---

class: pic

.interstitial[]

---

name: toc-deploying-a-self-hosted-registry
class: title

Deploying a self-hosted registry

.nav[
[Previous section](#toc-exposing-containers)
|
[Back to table of contents](#toc-chapter-2)
|
[Next section](#toc-exposing-services-internally)
]

.debug[(automatically generated title slide)]

---

# Deploying a self-hosted registry

- We will deploy a registry container, and expose it with a NodePort

.exercise[

- Create the registry service:
  ```bash
  kubectl run registry --image=registry
  ```

- Expose it on a NodePort:
  ```bash
  kubectl expose deploy/registry --port=5000 --type=NodePort
  ```

]

.debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)]
---

## Connecting to our registry

- We need to find out which port has been allocated

.exercise[

- View the service details:
  ```bash
  kubectl describe svc/registry
  ```

- Get the port number programmatically:
  ```bash
  NODEPORT=$(kubectl get svc/registry -o json | jq
.spec.ports[0].nodePort) REGISTRY=127.0.0.1:$NODEPORT ``` ] .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Testing our registry - A convenient Docker registry API route to remember is `/v2/_catalog` .exercise[ <!-- ```hide kubectl wait deploy/registry --for condition=available```--> - View the repositories currently held in our registry: ```bash curl $REGISTRY/v2/_catalog ``` ] -- We should see: ```json {"repositories":[]} ``` .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Building and pushing our images - We are going to use a convenient feature of Docker Compose .exercise[ - Go to the `stacks` directory: ```bash cd ~/container.training/stacks ``` - Build and push the images: ```bash export REGISTRY export TAG=v0.1 docker-compose -f dockercoins.yml build docker-compose -f dockercoins.yml push ``` ] Let's have a look at the `dockercoins.yml` file while this is building and pushing. .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ```yaml version: "3" services: rng: build: dockercoins/rng image: ${REGISTRY-127.0.0.1:5000}/rng:${TAG-latest} deploy: mode: global ... redis: image: redis ... worker: build: dockercoins/worker image: ${REGISTRY-127.0.0.1:5000}/worker:${TAG-latest} ... deploy: replicas: 10 ``` .warning[Just in case you were wondering ... Docker "services" are not Kubernetes "services".] .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- class: extra-details ## Avoiding the `latest` tag .warning[Make sure that you've set the `TAG` variable properly!] - If you don't, the tag will default to `latest` - The problem with `latest`: nobody knows what it points to! - the latest commit in the repo? 
- the latest commit in some branch? (Which one?) - the latest tag? - some random version pushed by a random team member? - If you keep pushing the `latest` tag, how do you roll back? - Image tags should be meaningful, i.e. correspond to code branches, tags, or hashes .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Deploying all the things - We can now deploy our code (as well as a redis instance) .exercise[ - Deploy `redis`: ```bash kubectl run redis --image=redis ``` - Deploy everything else: ```bash for SERVICE in hasher rng webui worker; do kubectl run $SERVICE --image=$REGISTRY/$SERVICE:$TAG done ``` ] .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Is this working? - After waiting for the deployment to complete, let's look at the logs! (Hint: use `kubectl get deploy -w` to watch deployment events) .exercise[ <!-- ```hide kubectl wait deploy/rng --for condition=available kubectl wait deploy/worker --for condition=available ``` --> - Look at some logs: ```bash kubectl logs deploy/rng kubectl logs deploy/worker ``` ] -- 🤔 `rng` is fine ... But not `worker`. -- 💡 Oh right! We forgot to `expose`. 
.debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- class: pic .interstitial[] --- name: toc-exposing-services-internally class: title Exposing services internally .nav[ [Previous section](#toc-deploying-a-self-hosted-registry) | [Back to table of contents](#toc-chapter-2) | [Next section](#toc-exposing-services-for-external-access) ] .debug[(automatically generated title slide)] --- # Exposing services internally - Three deployments need to be reachable by others: `hasher`, `redis`, `rng` - `worker` doesn't need to be exposed - `webui` will be dealt with later .exercise[ - Expose each deployment, specifying the right port: ```bash kubectl expose deployment redis --port 6379 kubectl expose deployment rng --port 80 kubectl expose deployment hasher --port 80 ``` ] .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Is this working yet? - The `worker` has an infinite loop, that retries 10 seconds after an error .exercise[ - Stream the worker's logs: ```bash kubectl logs deploy/worker --follow ``` (Give it about 10 seconds to recover) <!-- ```wait units of work done, updating hash counter``` ```keys ^C``` --> ] -- We should now see the `worker`, well, working happily. 
.debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- class: pic .interstitial[] --- name: toc-exposing-services-for-external-access class: title Exposing services for external access .nav[ [Previous section](#toc-exposing-services-internally) | [Back to table of contents](#toc-chapter-2) | [Next section](#toc-accessing-the-api-with-kubectl-proxy) ] .debug[(automatically generated title slide)] --- # Exposing services for external access - Now we would like to access the Web UI - We will expose it with a `NodePort` (just like we did for the registry) .exercise[ - Create a `NodePort` service for the Web UI: ```bash kubectl expose deploy/webui --type=NodePort --port=80 ``` - Check the port that was allocated: ```bash kubectl get svc ``` ] .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- ## Accessing the web UI - We can now connect to *any node*, on the allocated node port, to view the web UI .exercise[ - Open the web UI in your browser (http://node-ip-address:3xxxx/) <!-- ```open http://node1:3xxxx/``` --> ] -- Yes, this may take a little while to update. 
*(Narrator: it was DNS.)* -- *Alright, we're back to where we started, when we were running on a single node!* .debug[[k8s/ourapponkube.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/ourapponkube.md)] --- class: pic .interstitial[] --- name: toc-accessing-the-api-with-kubectl-proxy class: title Accessing the API with `kubectl proxy` .nav[ [Previous section](#toc-exposing-services-for-external-access) | [Back to table of contents](#toc-chapter-2) | [Next section](#toc-the-kubernetes-dashboard) ] .debug[(automatically generated title slide)] --- # Accessing the API with `kubectl proxy` - The API requires us to authenticate.red[¹] - There are many authentication methods available, including: - TLS client certificates <br/> (that's what we've used so far) - HTTP basic password authentication <br/> (from a static file; not recommended) - various token mechanisms <br/> (detailed in the [documentation](https://kubernetes.io/docs/reference/access-authn-authz/authentication/#authentication-strategies)) .red[¹]OK, we lied. If you don't authenticate, you are considered to be user `system:anonymous`, which doesn't have any access rights by default. .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Accessing the API directly - Let's see what happens if we try to access the API directly with `curl` .exercise[ - Retrieve the ClusterIP allocated to the `kubernetes` service: ```bash kubectl get svc kubernetes ``` - Replace the IP below and try to connect with `curl`: ```bash curl -k https://`10.96.0.1`/ ``` ] The API will tell us that user `system:anonymous` cannot access this path. 
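In a script, it can be handy to look only at the HTTP status code of that answer. Here is a small sketch, assuming `curl` is installed; the helper name `api_status` is invented for this example, and the exact code returned depends on how the cluster is configured.

```bash
# Hypothetical helper: print only the HTTP status code returned by a URL.
#   -k            skip certificate verification
#   -s            hide the progress meter
#   -o /dev/null  discard the response body
#   -w ...        print the status code once the request is done
api_status() {
  curl -k -s -o /dev/null -w '%{http_code}\n' "$1"
}

# Example (replace the address with the CLUSTER-IP of the kubernetes service):
#   api_status https://10.96.0.1/
```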
.debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Authenticating to the API If we wanted to talk to the API, we would need to: - extract our TLS key and certificate information from `~/.kube/config` (the information is in PEM format, encoded in base64) - use that information to present our certificate when connecting (for instance, with `openssl s_client -key ... -cert ... -connect ...`) - figure out exactly which credentials to use (once we start juggling multiple clusters) - change that whole process if we're using another authentication method 🤔 There has to be a better way! .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Using `kubectl proxy` for authentication - `kubectl proxy` runs a proxy in the foreground - This proxy lets us access the Kubernetes API without authentication (`kubectl proxy` adds our credentials on the fly to the requests) - This proxy lets us access the Kubernetes API over plain HTTP - This is a great tool to learn and experiment with the Kubernetes API - ... And for serious usages as well (suitable for one-shot scripts) - For unattended use, it is better to create a [service account](https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/) .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Trying `kubectl proxy` - Let's start `kubectl proxy` and then do a simple request with `curl`! .exercise[ - Start `kubectl proxy` in the background: ```bash kubectl proxy & ``` - Access the API's default route: ```bash curl localhost:8001 ``` <!-- ```wait /version``` ```keys ^J``` --> - Terminate the proxy: ```bash kill %1 ``` ] The output is a list of available API routes. 
.debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## `kubectl proxy` is intended for local use - By default, the proxy listens on port 8001 (But this can be changed, or we can tell `kubectl proxy` to pick a port) - By default, the proxy binds to `127.0.0.1` (Making it unreachable from other machines, for security reasons) - By default, the proxy only accepts connections from: `^localhost$,^127\.0\.0\.1$,^\[::1\]$` - This is great when running `kubectl proxy` locally - Not-so-great when you want to connect to the proxy from a remote machine .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Running `kubectl proxy` on a remote machine - If we wanted to connect to the proxy from another machine, we would need to: - bind to `INADDR_ANY` instead of `127.0.0.1` - accept connections from any address - This is achieved with: ``` kubectl proxy --port=8888 --address=0.0.0.0 --accept-hosts=.* ``` .warning[Do not do this on a real cluster: it opens full unauthenticated access!] .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Security considerations - Running `kubectl proxy` openly is a huge security risk - It is slightly better to run the proxy where you need it (and copy credentials, e.g. `~/.kube/config`, to that place) - It is even better to use a limited account with reduced permissions .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- ## Good to know ... 
- `kubectl proxy` also gives access to all internal services - Specifically, services are exposed as such: ``` /api/v1/namespaces/<namespace>/services/<service>/proxy ``` - We can use `kubectl proxy` to access an internal service in a pinch (or, for non HTTP services, `kubectl port-forward`) - This is not very useful when running `kubectl` directly on the cluster (since we could connect to the services directly anyway) - But it is very powerful as soon as you run `kubectl` from a remote machine .debug[[k8s/kubectlproxy.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlproxy.md)] --- class: pic .interstitial[] --- name: toc-the-kubernetes-dashboard class: title The Kubernetes dashboard .nav[ [Previous section](#toc-accessing-the-api-with-kubectl-proxy) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-security-implications-of-kubectl-apply) ] .debug[(automatically generated title slide)] --- # The Kubernetes dashboard - Kubernetes resources can also be viewed with a web dashboard - We are going to deploy that dashboard with *three commands:* 1) actually *run* the dashboard 2) bypass SSL for the dashboard 3) bypass authentication for the dashboard -- There is an additional step to make the dashboard available from outside (we'll get to that) -- .footnote[.warning[Yes, this will open our cluster to all kinds of shenanigans. 
Don't do this at home.]] .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## 1) Running the dashboard - We need to create a *deployment* and a *service* for the dashboard - But also a *secret*, a *service account*, a *role* and a *role binding* - All these things can be defined in a YAML file and created with `kubectl apply -f` .exercise[ - Create all the dashboard resources, with the following command: ```bash kubectl apply -f ~/container.training/k8s/kubernetes-dashboard.yaml ``` ] .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## 2) Bypassing SSL for the dashboard - The Kubernetes dashboard uses HTTPS, but we don't have a certificate - Recent versions of Chrome (63 and later) and Edge will refuse to connect (You won't even get the option to ignore a security warning!) - We could (and should!) get a certificate, e.g. with [Let's Encrypt](https://letsencrypt.org/) - ... But for convenience, for this workshop, we'll forward HTTP to HTTPS .warning[Do not do this at home, or even worse, at work!] .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Running the SSL unwrapper - We are going to run [`socat`](http://www.dest-unreach.org/socat/doc/socat.html), telling it to accept TCP connections and relay them over SSL - Then we will expose that `socat` instance with a `NodePort` service - For convenience, these steps are neatly encapsulated into another YAML file .exercise[ - Apply the convenient YAML file, and defeat SSL protection: ```bash kubectl apply -f ~/container.training/k8s/socat.yaml ``` ] .warning[All our dashboard traffic is now clear-text, including passwords!] 
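We have not looked inside `socat.yaml`, so here is a hedged sketch of the kind of `socat` invocation that "accepts TCP connections and relays them over SSL". The listening port, the target `kubernetes-dashboard:443`, and the function name `unwrap_ssl` are assumptions made for illustration; the actual YAML file may differ.

```bash
# Hypothetical helper: accept plain TCP on a port and relay each
# connection over SSL to a target host:port.
#   $1: port to listen on (e.g. 80)
#   $2: SSL target as host:port (e.g. kubernetes-dashboard:443)
# fork      -> handle each incoming connection in a child process
# reuseaddr -> allow quick restarts on the same port
# verify=0  -> do NOT verify the server certificate (insecure!)
unwrap_ssl() {
  local listen_port=$1 target=$2
  socat "TCP-LISTEN:${listen_port},fork,reuseaddr" "OPENSSL:${target},verify=0"
}
```

For instance, `unwrap_ssl 80 kubernetes-dashboard:443` would be one way to run such a relay inside a pod. Note that `verify=0` is what makes this work without a valid certificate, and also what makes it unsafe.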
.debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Connecting to the dashboard .exercise[ - Check which port the dashboard is on: ```bash kubectl -n kube-system get svc socat ``` ] You'll want the `3xxxx` port. .exercise[ - Connect to http://oneofournodes:3xxxx/ <!-- ```open http://node1:3xxxx/``` --> ] The dashboard will then ask you which authentication you want to use. .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Dashboard authentication - We have three authentication options at this point: - token (associated with a role that has appropriate permissions) - kubeconfig (e.g. using the `~/.kube/config` file from `node1`) - "skip" (use the dashboard "service account") - Let's use "skip": we get a bunch of warnings and don't see much .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## 3) Bypass authentication for the dashboard - The dashboard documentation [explains how to do this](https://github.com/kubernetes/dashboard/wiki/Access-control#admin-privileges) - We just need to load another YAML file! .exercise[ - Grant admin privileges to the dashboard so we can see our resources: ```bash kubectl apply -f ~/container.training/k8s/grant-admin-to-dashboard.yaml ``` - Reload the dashboard and enjoy! ] -- .warning[By the way, we just added a backdoor to our Kubernetes cluster!] .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Exposing the dashboard over HTTPS - We took a shortcut by forwarding HTTP to HTTPS inside the cluster - Let's expose the dashboard over HTTPS! 
- The dashboard is exposed through a `ClusterIP` service (internal traffic only) - We will change that into a `NodePort` service (accepting outside traffic) .exercise[ - Edit the service: ``` kubectl edit service kubernetes-dashboard ``` ] -- `NotFound`?!? Y U NO WORK?!? .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Editing the `kubernetes-dashboard` service - If we look at the [YAML](https://github.com/jpetazzo/container.training/blob/master/k8s/kubernetes-dashboard.yaml) that we loaded before, we'll get a hint -- - The dashboard was created in the `kube-system` namespace -- .exercise[ - Edit the service: ```bash kubectl -n kube-system edit service kubernetes-dashboard ``` - Change `type:` from `ClusterIP` to `NodePort`, save, and exit <!-- ```wait Please edit the object below``` ```keys /ClusterIP``` ```keys ^J``` ```keys cwNodePort``` ```keys ^[ ``` ] ```keys :wq``` ```keys ^J``` --> - Check the port that was assigned with `kubectl -n kube-system get services` - Connect to https://oneofournodes:3xxxx/ (yes, https) ] .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## Running the Kubernetes dashboard securely - The steps that we just showed you are *for educational purposes only!* - If you do that on your production cluster, people [can and will abuse it](https://blog.redlock.io/cryptojacking-tesla) - For an in-depth discussion about securing the dashboard, <br/> check [this excellent post on Heptio's blog](https://blog.heptio.com/on-securing-the-kubernetes-dashboard-16b09b1b7aca) .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- class: pic .interstitial[] --- name: toc-security-implications-of-kubectl-apply class: title Security implications of `kubectl apply` .nav[ [Previous section](#toc-the-kubernetes-dashboard) | [Back to
table of contents](#toc-chapter-3) | [Next section](#toc-scaling-a-deployment) ] .debug[(automatically generated title slide)] --- # Security implications of `kubectl apply` - When we do `kubectl apply -f <URL>`, we create arbitrary resources - Resources can be evil; imagine a `deployment` that ... -- - starts bitcoin miners on the whole cluster -- - hides in a non-default namespace -- - bind-mounts our nodes' filesystem -- - inserts SSH keys in the root account (on the node) -- - encrypts our data and ransoms it -- - ☠️☠️☠️ .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- ## `kubectl apply` is the new `curl | sh` - `curl | sh` is convenient - It's safe if you use HTTPS URLs from trusted sources -- - `kubectl apply -f` is convenient - It's safe if you use HTTPS URLs from trusted sources - Example: the official setup instructions for most pod networks -- - It introduces new failure modes (like if you try to apply yaml from a link that's no longer valid) .debug[[k8s/dashboard.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/dashboard.md)] --- class: pic .interstitial[] --- name: toc-scaling-a-deployment class: title Scaling a deployment .nav[ [Previous section](#toc-security-implications-of-kubectl-apply) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-daemon-sets) ] .debug[(automatically generated title slide)] --- # Scaling a deployment - We will start with an easy one: the `worker` deployment .exercise[ - Open two new terminals to check what's going on with pods and deployments: ```bash kubectl get pods -w kubectl get deployments -w ``` <!-- ```wait RESTARTS``` ```keys ^C``` ```wait AVAILABLE``` ```keys ^C``` --> - Now, create more `worker` replicas: ```bash kubectl scale deploy/worker --replicas=10 ``` ] After a few seconds, the graph in the web UI should show up. 
<br/> (And peak at 10 hashes/second, just like when we were running on a single one.) .debug[[k8s/kubectlscale.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/kubectlscale.md)] --- class: pic .interstitial[] --- name: toc-daemon-sets class: title Daemon sets .nav[ [Previous section](#toc-scaling-a-deployment) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-updating-a-service-through-labels-and-selectors) ] .debug[(automatically generated title slide)] --- # Daemon sets - We want to scale `rng` in a way that is different from how we scaled `worker` - We want one (and exactly one) instance of `rng` per node - What if we just scale up `deploy/rng` to the number of nodes? - nothing guarantees that the `rng` containers will be distributed evenly - if we add nodes later, they will not automatically run a copy of `rng` - if we remove (or reboot) a node, one `rng` container will restart elsewhere - Instead of a `deployment`, we will use a `daemonset` .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Daemon sets in practice - Daemon sets are great for cluster-wide, per-node processes: - `kube-proxy` - `weave` (our overlay network) - monitoring agents - hardware management tools (e.g. SCSI/FC HBA agents) - etc. - They can also be restricted to run [only on some nodes](https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/#running-pods-on-only-some-nodes) .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Creating a daemon set - Unfortunately, as of Kubernetes 1.12, the CLI cannot create daemon sets -- - More precisely: it doesn't have a subcommand to create a daemon set -- - But any kind of resource can always be created by providing a YAML description: ```bash kubectl apply -f foo.yaml ``` -- - How do we create the YAML file for our daemon set? 
-- - option 1: [read the docs](https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/#create-a-daemonset) -- - option 2: `vi` our way out of it .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Creating the YAML file for our daemon set - Let's start with the YAML file for the current `rng` resource .exercise[ - Dump the `rng` resource in YAML: ```bash kubectl get deploy/rng -o yaml --export >rng.yml ``` - Edit `rng.yml` ] Note: `--export` will remove "cluster-specific" information, i.e.: - namespace (so that the resource is not tied to a specific namespace) - status and creation timestamp (useless when creating a new resource) - resourceVersion and uid (these would cause... *interesting* problems) .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## "Casting" a resource to another - What if we just changed the `kind` field? (It can't be that easy, right?) .exercise[ - Change `kind: Deployment` to `kind: DaemonSet` <!-- ```bash vim rng.yml``` ```wait kind: Deployment``` ```keys /Deployment``` ```keys ^J``` ```keys cwDaemonSet``` ```keys ^[``` ] ```keys :wq``` ```keys ^J``` --> - Save, quit - Try to create our new resource: ``` kubectl apply -f rng.yml ``` ] -- We all knew this couldn't be that easy, right! .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Understanding the problem - The core of the error is: ``` error validating data: [ValidationError(DaemonSet.spec): unknown field "replicas" in io.k8s.api.extensions.v1beta1.DaemonSetSpec, ... 
``` -- - *Obviously,* it doesn't make sense to specify a number of replicas for a daemon set -- - Workaround: fix the YAML - remove the `replicas` field - remove the `strategy` field (which defines the rollout mechanism for a deployment) - remove the `progressDeadlineSeconds` field (also used by the rollout mechanism) - remove the `status: {}` line at the end -- - Or, we could also ... .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Use the `--force`, Luke - We could also tell Kubernetes to ignore these errors and try anyway - The `--force` flag's actual name is `--validate=false` .exercise[ - Try to load our YAML file and ignore errors: ```bash kubectl apply -f rng.yml --validate=false ``` ] -- 🎩✨🐇 -- Wait ... Now, can it be *that* easy? .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Checking what we've done - Did we transform our `deployment` into a `daemonset`? .exercise[ - Look at the resources that we have now: ```bash kubectl get all ``` ] -- We have two resources called `rng`: - the *deployment* that was existing before - the *daemon set* that we just created We also have one too many pods. <br/> (The pod corresponding to the *deployment* still exists.) .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## `deploy/rng` and `ds/rng` - You can have different resource types with the same name (i.e. 
a *deployment* and a *daemon set* both named `rng`) - We still have the old `rng` *deployment* ``` NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE deployment.apps/rng 1 1 1 1 18m ``` - But now we have the new `rng` *daemon set* as well ``` NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE daemonset.apps/rng 2 2 2 2 2 <none> 9s ``` .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Too many pods - If we check with `kubectl get pods`, we see: - *one pod* for the deployment (named `rng-xxxxxxxxxx-yyyyy`) - *one pod per node* for the daemon set (named `rng-zzzzz`) ``` NAME READY STATUS RESTARTS AGE rng-54f57d4d49-7pt82 1/1 Running 0 11m rng-b85tm 1/1 Running 0 25s rng-hfbrr 1/1 Running 0 25s [...] ``` -- The daemon set created one pod per node, except on the master node. The master node has [taints](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/) preventing pods from running there. (To schedule a pod on this node anyway, the pod will require appropriate [tolerations](https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/).) .footnote[(Off by one? We don't run these pods on the node hosting the control plane.)] .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## What are all these pods doing? - Let's check the logs of all these `rng` pods - All these pods have a `run=rng` label: - the first pod, because that's what `kubectl run` does - the other ones (in the daemon set), because we *copied the spec from the first one* - Therefore, we can query everybody's logs using that `run=rng` selector .exercise[ - Check the logs of all the pods having a label `run=rng`: ```bash kubectl get pods -l run=rng -o name | xargs -n 1 kubectl logs --tail 1 ``` ] -- It appears that *all the pods* are serving requests at the moment. 
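With several pods answering, the `xargs` one-liner does not say which log line came from which pod. The sketch below is one possible variant that prefixes each line with the pod name; the function name `last_log_lines` is a hypothetical helper, and it assumes the same `kubectl` context as the exercise above.

```bash
# Hypothetical helper: print the last log line of every pod matching
# a label selector, prefixed with the pod name.
#   $1: label selector (e.g. run=rng)
last_log_lines() {
  local selector=$1 pod
  # `-o name` prints one `pod/<name>` entry per line
  for pod in $(kubectl get pods -l "$selector" -o name); do
    printf '%s: ' "$pod"
    kubectl logs --tail 1 "$pod"
  done
}
```

Running `last_log_lines run=rng` makes it easier to see that both the deployment pod and the daemon set pods are receiving requests.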
.debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## The magic of selectors - The `rng` *service* is load balancing requests to a set of pods - This set of pods is defined as "pods having the label `run=rng`" .exercise[ - Check the *selector* in the `rng` service definition: ```bash kubectl describe service rng ``` ] When we created additional pods with this label, they were automatically detected by `svc/rng` and added as *endpoints* to the associated load balancer. .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Removing the first pod from the load balancer - What would happen if we removed that pod, with `kubectl delete pod ...`? -- The `replicaset` would re-create it immediately. -- - What would happen if we removed the `run=rng` label from that pod? -- The `replicaset` would re-create it immediately. -- ... Because what matters to the `replicaset` is the number of pods *matching that selector.* -- - But but but ... Don't we have more than one pod with `run=rng` now? -- The answer lies in the exact selector used by the `replicaset` ... .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Deep dive into selectors - Let's look at the selectors for the `rng` *deployment* and the associated *replica set* .exercise[ - Show detailed information about the `rng` deployment: ```bash kubectl describe deploy rng ``` - Show detailed information about the `rng` replica set: <br/>(The second command doesn't require you to get the exact name of the replica set) ```bash kubectl describe rs rng-yyyyyyyy kubectl describe rs -l run=rng ``` ] -- The replica set selector also has a `pod-template-hash`, unlike the pods in our daemon set.
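To make the selector logic concrete, here is a toy model in plain bash (no cluster needed). It treats labels and selectors as comma-separated `key=value` lists and checks that every selector pair appears among the labels, which is how an equality-based selector behaves. The function name `matches_selector` and the hash values are made up for illustration.

```bash
# Toy model of equality-based label selection.
#   $1: labels of a pod, as "k1=v1,k2=v2"
#   $2: selector, in the same format
# Returns 0 if every selector pair is present in the labels, 1 otherwise.
matches_selector() {
  local labels=",$1," selector=$2 pair
  local IFS=,
  for pair in $selector; do
    case "$labels" in
      *",$pair,"*) ;;      # this pair is present, keep checking
      *) return 1 ;;       # one missing pair is enough to fail
    esac
  done
  return 0
}

# A daemon set pod (hypothetical labels) matches the service selector...
matches_selector "run=rng,controller-revision-hash=abc123" "run=rng" \
  && echo "service selects the daemon set pod"

# ...but not the replica set selector, which also requires pod-template-hash.
matches_selector "run=rng,controller-revision-hash=abc123" \
  "run=rng,pod-template-hash=54f57d4d49" \
  || echo "replica set ignores the daemon set pod"
```

This is why the replica set still counts exactly one pod of its own, even though several pods carry `run=rng`.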
.debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- class: pic .interstitial[] --- name: toc-updating-a-service-through-labels-and-selectors class: title Updating a service through labels and selectors .nav[ [Previous section](#toc-daemon-sets) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-rolling-updates) ] .debug[(automatically generated title slide)] --- # Updating a service through labels and selectors - What if we want to drop the `rng` deployment from the load balancer? - Option 1: - destroy it - Option 2: - add an extra *label* to the daemon set - update the service *selector* to refer to that *label* -- Of course, option 2 offers more learning opportunities. Right? .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Add an extra label to the daemon set - We will update the daemon set "spec" - Option 1: - edit the `rng.yml` file that we used earlier - load the new definition with `kubectl apply` - Option 2: - use `kubectl edit` -- *If you feel like you got this💕🌈, feel free to try directly.* *We've included a few hints on the next slides for your convenience!* .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## We've put resources in your resources - Reminder: a daemon set is a resource that creates more resources! - There is a difference between: - the label(s) of a resource (in the `metadata` block in the beginning) - the selector of a resource (in the `spec` block) - the label(s) of the resource(s) created by the first resource (in the `template` block) - You need to update the selector and the template (metadata labels are not mandatory) - The template must match the selector (i.e. 
the resource will refuse to create resources that it will not select) .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Adding our label - Let's add a label `isactive: yes` - In YAML, `yes` should be quoted; i.e. `isactive: "yes"` .exercise[ - Update the daemon set to add `isactive: "yes"` to the selector and template label: ```bash kubectl edit daemonset rng ``` <!-- ```wait Please edit the object below``` ```keys /run: rng``` ```keys ^J``` ```keys noisactive: "yes"``` ```keys ^[``` ] ```keys /run: rng``` ```keys ^J``` ```keys oisactive: "yes"``` ```keys ^[``` ] ```keys :wq``` ```keys ^J``` --> - Update the service to add `isactive: "yes"` to its selector: ```bash kubectl edit service rng ``` <!-- ```wait Please edit the object below``` ```keys /run: rng``` ```keys ^J``` ```keys noisactive: "yes"``` ```keys ^[``` ] ```keys :wq``` ```keys ^J``` --> ] .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Checking what we've done .exercise[ - Check the most recent log line of all `run=rng` pods to confirm that exactly one per node is now active: ```bash kubectl logs -l run=rng --tail 1 ``` ] The timestamps should give us a hint about how many pods are currently receiving traffic. 
.exercise[ - Look at the pods that we have right now: ```bash kubectl get pods ``` ] .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Cleaning up - The pods of the deployment and the "old" daemon set are still running - We are going to identify them programmatically .exercise[ - List the pods with `run=rng` but without `isactive=yes`: ```bash kubectl get pods -l run=rng,isactive!=yes ``` - Remove these pods: ```bash kubectl delete pods -l run=rng,isactive!=yes ``` ] .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Cleaning up stale pods ``` $ kubectl get pods NAME READY STATUS RESTARTS AGE rng-54f57d4d49-7pt82 1/1 Terminating 0 51m rng-54f57d4d49-vgz9h 1/1 Running 0 22s rng-b85tm 1/1 Terminating 0 39m rng-hfbrr 1/1 Terminating 0 39m rng-vplmj 1/1 Running 0 7m rng-xbpvg 1/1 Running 0 7m [...] ``` - The extra pods (noted `Terminating` above) are going away - ... But a new one (`rng-54f57d4d49-vgz9h` above) was restarted immediately! -- - Remember, the *deployment* still exists, and makes sure that one pod is up and running - If we delete the pod associated to the deployment, it is recreated automatically .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Deleting a deployment .exercise[ - Remove the `rng` deployment: ```bash kubectl delete deployment rng ``` ] -- - The pod that was created by the deployment is now being terminated: ``` $ kubectl get pods NAME READY STATUS RESTARTS AGE rng-54f57d4d49-vgz9h 1/1 Terminating 0 4m rng-vplmj 1/1 Running 0 11m rng-xbpvg 1/1 Running 0 11m [...] ``` Ding, dong, the deployment is dead! And the daemon set lives on. 
.debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Avoiding extra pods - When we changed the definition of the daemon set, it immediately created new pods. We had to remove the old ones manually. - How could we have avoided this? -- - By adding the `isactive: "yes"` label to the pods before changing the daemon set! - This can be done programmatically with `kubectl patch`: ```bash PATCH=' metadata: labels: isactive: "yes" ' kubectl get pods -l run=rng,controller-revision-hash -o name | xargs kubectl patch -p "$PATCH" ``` .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Labels and debugging - When a pod is misbehaving, we can delete it: another one will be recreated - But we can also change its labels - It will be removed from the load balancer (it won't receive traffic anymore) - Another pod will be recreated immediately - But the problematic pod is still here, and we can inspect and debug it - We can even re-add it to the rotation if necessary (Very useful to troubleshoot intermittent and elusive bugs) .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- ## Labels and advanced rollout control - Conversely, we can add pods matching a service's selector - These pods will then receive requests and serve traffic - Examples: - one-shot pod with all debug flags enabled, to collect logs - pods created automatically, but added to rotation in a second step <br/> (by setting their label accordingly) - This gives us building blocks for canary and blue/green deployments .debug[[k8s/daemonset.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/daemonset.md)] --- class: pic .interstitial[] --- name: toc-rolling-updates class: title Rolling updates .nav[ [Previous
section](#toc-updating-a-service-through-labels-and-selectors) | [Back to table of contents](#toc-chapter-3) | [Next section](#toc-accessing-logs-from-the-cli) ] .debug[(automatically generated title slide)] --- # Rolling updates - By default (without rolling updates), when a scaled resource is updated: - new pods are created - old pods are terminated - ... all at the same time - if something goes wrong, ¯\\\_(ツ)\_/¯ .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Rolling updates - With rolling updates, when a resource is updated, it happens progressively - Two parameters determine the pace of the rollout: `maxUnavailable` and `maxSurge` - They can be specified in absolute number of pods, or percentage of the `replicas` count - At any given time ... - there will always be at least `replicas`-`maxUnavailable` pods available - there will never be more than `replicas`+`maxSurge` pods in total - there will therefore be up to `maxUnavailable`+`maxSurge` pods being updated - We have the possibility to rollback to the previous version <br/>(if the update fails or is unsatisfactory in any way) .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Checking current rollout parameters - Recall how we build custom reports with `kubectl` and `jq`: .exercise[ - Show the rollout plan for our deployments: ```bash kubectl get deploy -o json | jq ".items[] | {name:.metadata.name} + .spec.strategy.rollingUpdate" ``` ] .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Rolling updates in practice - As of Kubernetes 1.8, we can do rolling updates with: `deployments`, `daemonsets`, `statefulsets` - Editing one of these resources will automatically result in a rolling update - Rolling updates can be monitored with the `kubectl rollout` subcommand 
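The `maxUnavailable` and `maxSurge` bounds described earlier can be worked out with plain shell arithmetic. This is a minimal sketch: the function names `resolve_pods` and `rollout_bounds` are hypothetical, and it relies on the documented Kubernetes behavior that a percentage is rounded *down* for `maxUnavailable` and rounded *up* for `maxSurge`.

```bash
# Resolve a maxUnavailable/maxSurge value ("25%" or "3") to a pod count.
#   $1: value, $2: replicas, $3: "floor" or "ceil" (rounding for percentages)
resolve_pods() {
  local value=$1 replicas=$2 rounding=$3 percent
  case "$value" in
    *%)
      percent=${value%\%}   # strip the trailing percent sign
      if [ "$rounding" = ceil ]; then
        echo $(( (replicas * percent + 99) / 100 ))
      else
        echo $(( replicas * percent / 100 ))
      fi
      ;;
    *) echo "$value" ;;      # absolute number: use as-is
  esac
}

# Print the availability floor and the total pod ceiling for a rollout.
#   $1: replicas, $2: maxUnavailable, $3: maxSurge
rollout_bounds() {
  local replicas=$1 max_unavailable max_surge
  max_unavailable=$(resolve_pods "$2" "$replicas" floor)
  max_surge=$(resolve_pods "$3" "$replicas" ceil)
  echo "min_available=$(( replicas - max_unavailable )) max_total=$(( replicas + max_surge ))"
}

rollout_bounds 10 25% 25%   # prints: min_available=8 max_total=13
```

With 10 replicas and both parameters at 25%, at most 2 pods may be unavailable and at most 3 extra pods may be created, so up to 5 pods can be in the process of being updated at once.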
.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Building a new version of the `worker` service .exercise[ - Go to the `stacks` directory: ```bash cd ~/container.training/stacks ``` - Edit `dockercoins/worker/worker.py`; update the first `sleep` line to sleep 1 second - Build a new tag and push it to the registry: ```bash #export REGISTRY=localhost:3xxxx export TAG=v0.2 docker-compose -f dockercoins.yml build docker-compose -f dockercoins.yml push ``` ] .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Rolling out the new `worker` service .exercise[ - Let's monitor what's going on by opening a few terminals, and run: ```bash kubectl get pods -w kubectl get replicasets -w kubectl get deployments -w ``` <!-- ```wait NAME``` ```keys ^C``` --> - Update `worker` either with `kubectl edit`, or by running: ```bash kubectl set image deploy worker worker=$REGISTRY/worker:$TAG ``` ] -- That rollout should be pretty quick. What shows in the web UI?
.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Give it some time - At first, it looks like nothing is happening (the graph remains at the same level) - According to `kubectl get deploy -w`, the `deployment` was updated really quickly - But `kubectl get pods -w` tells a different story - The old `pods` are still here, and they stay in `Terminating` state for a while - Eventually, they are terminated; and then the graph decreases significantly - This delay is due to the fact that our worker doesn't handle signals - Kubernetes sends a "polite" shutdown request to the worker, which ignores it - After a grace period, Kubernetes gets impatient and kills the container (The grace period is 30 seconds, but [can be changed](https://kubernetes.io/docs/concepts/workloads/pods/pod/#termination-of-pods) if needed) .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## Rolling out something invalid - What happens if we make a mistake? .exercise[ - Update `worker` by specifying a non-existent image: ```bash export TAG=v0.3 kubectl set image deploy worker worker=$REGISTRY/worker:$TAG ``` - Check what's going on: ```bash kubectl rollout status deploy worker ``` <!-- ```wait Waiting for deployment``` ```keys ^C``` --> ] -- Our rollout is stuck. However, the app is not dead. (After a minute, it will stabilize to be 20-25% slower.) .debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)] --- ## What's going on with our rollout? - Why is our app a bit slower? - Because `MaxUnavailable=25%` ... So the rollout terminated 2 replicas out of 10 available - Okay, but why do we see 5 new replicas being rolled out? - Because `MaxSurge=25%` ... 
So in addition to replacing 2 replicas, the rollout is also starting 3 more

- It rounded down the number of MaxUnavailable pods conservatively (25% of 10 is 2.5, hence 2),
  <br/>
  but the total number of pods being rolled out is allowed to be 25+25=50%

.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

class: extra-details

## The nitty-gritty details

- We start with 10 pods running for the `worker` deployment

- Current settings: MaxUnavailable=25% and MaxSurge=25%

- When we start the rollout:

  - two replicas are taken down (as per MaxUnavailable=25%)
  - two others are created (with the new version) to replace them
  - three others are created (with the new version, as per MaxSurge=25%)

- Now we have 8 replicas up and running, and 5 being deployed

- Our rollout is stuck at this point!

.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

## Checking the dashboard during the bad rollout

If you haven't deployed the Kubernetes dashboard earlier, just skip this slide.

.exercise[

- Check which port the dashboard is on:
  ```bash
  kubectl -n kube-system get svc socat
  ```

]

Note the `3xxxx` port.
.exercise[

- Connect to http://oneofournodes:3xxxx/

<!-- ```open https://node1:3xxxx/``` -->

]

--

- We have failures in Deployments, Pods, and Replica Sets

.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

## Recovering from a bad rollout

- We could push some `v0.3` image

  (the pod retry logic will eventually catch it and the rollout will proceed)

- Or we could invoke a manual rollback

.exercise[

<!-- ```keys ^C ``` -->

- Cancel the deployment and wait for the dust to settle down:
  ```bash
  kubectl rollout undo deploy worker
  kubectl rollout status deploy worker
  ```

]

.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

## Changing rollout parameters

- We want to:

  - revert to `v0.1`
  - be conservative on availability (always have desired number of available workers)
  - go slow on rollout speed (update only one pod at a time)
  - give some time to our workers to "warm up" before starting more

The corresponding changes can be expressed in the following YAML snippet:

.small[
```yaml
spec:
  template:
    spec:
      containers:
      - name: worker
        image: $REGISTRY/worker:v0.1
  strategy:
    rollingUpdate:
      maxUnavailable: 0
      maxSurge: 1
  minReadySeconds: 10
```
]

.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

## Applying changes through a YAML patch

- We could use `kubectl edit deployment worker`

- But we could also use `kubectl patch` with the exact YAML shown before

.exercise[

.small[

- Apply all our changes and wait for them to take effect:
  ```bash
  kubectl patch deployment worker -p "
    spec:
      template:
        spec:
          containers:
          - name: worker
            image: $REGISTRY/worker:v0.1
      strategy:
        rollingUpdate:
          maxUnavailable: 0
          maxSurge: 1
      minReadySeconds: 10
    "
  kubectl rollout status deployment worker
  kubectl get deploy -o json worker |
          jq "{name:.metadata.name} + .spec.strategy.rollingUpdate"
  ```

]

]
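
As a side note on the percentage-based defaults seen earlier (`MaxUnavailable=25%`, `MaxSurge=25%`): Kubernetes resolves percentages to pod counts by rounding `maxUnavailable` down and `maxSurge` up. The shell arithmetic below is only an illustrative sketch of that rounding for our 10 replicas. The variable names are made up for this example, and this is not how the controller itself is implemented.

```bash
# Illustrative values matching the bad rollout seen earlier
replicas=10
max_unavailable_pct=25
max_surge_pct=25

# maxUnavailable is rounded down (integer division truncates)
max_unavailable=$(( replicas * max_unavailable_pct / 100 ))

# maxSurge is rounded up (adding 99 before dividing by 100 gives the ceiling)
max_surge=$(( (replicas * max_surge_pct + 99) / 100 ))

echo "maxUnavailable: $max_unavailable pods"
echo "maxSurge: $max_surge pods"
echo "minimum available during rollout: $(( replicas - max_unavailable ))"
echo "maximum total pods during rollout: $(( replicas + max_surge ))"
```

With absolute values such as `maxUnavailable: 0` and `maxSurge: 1`, no rounding is involved: the rollout keeps all 10 workers available and adds at most one extra pod at a time.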
.debug[[k8s/rollout.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/rollout.md)]
---

class: pic

.interstitial[]

---

name: toc-accessing-logs-from-the-cli
class: title

Accessing logs from the CLI

.nav[
[Previous section](#toc-rolling-updates)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-managing-stacks-with-helm)
]

.debug[(automatically generated title slide)]

---

# Accessing logs from the CLI

- The `kubectl logs` command has limitations:

  - it cannot stream logs from multiple pods at a time

  - when showing logs from multiple pods, it mixes them all together

- We are going to see how to do it better

.debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)]
---

## Doing it manually

- We *could* (if we were so inclined) write a program or script that would:

  - take a selector as an argument

  - enumerate all pods matching that selector (with `kubectl get -l ...`)

  - fork one `kubectl logs --follow ...` command per container

  - annotate the logs (the output of each `kubectl logs ...` process) with their origin

  - preserve ordering by using `kubectl logs --timestamps ...` and merge the output

--

- We *could* do it, but thankfully, others did it for us already!

.debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)]
---

## Stern

[Stern](https://github.com/wercker/stern) is an open source project
by [Wercker](http://www.wercker.com/).

From the README:

*Stern allows you to tail multiple pods on Kubernetes and multiple containers within the pod. Each result is color coded for quicker debugging.*

*The query is a regular expression so the pod name can easily be filtered and you don't need to specify the exact id (for instance omitting the deployment id). If a pod is deleted it gets removed from tail and if a new pod is added it automatically gets tailed.*

Exactly what we need!
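
For comparison, here is a rough sketch of the manual approach outlined on the previous slide. It is deliberately simplified: the helper name `merged_logs` is made up for this example, it assumes one container per pod, it prints existing logs once instead of following them, and it relies on timestamps having a uniform format so that a plain text sort orders them.

```bash
# Hypothetical helper (not part of kubectl): print the logs of every pod
# matching a selector, prefix each line with the pod name, and merge by timestamp.
merged_logs() {
  selector="$1"
  for pod in $(kubectl get pods -l "$selector" -o name); do
    # --timestamps puts a timestamp at the start of each log line;
    # sed then prepends the pod name so we know where the line came from
    kubectl logs --timestamps "$pod" | sed "s|^|$pod |"
  done | LC_ALL=C sort -k 2,2   # field 2 is the timestamp
}

# Usage (requires access to a cluster):
#   merged_logs run=rng
```

Stern covers the parts this sketch leaves out: streaming, multiple containers, and pods that come and go.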
.debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)] --- ## Installing Stern - Run `stern` (without arguments) to check if it's installed: ``` $ stern Tail multiple pods and containers from Kubernetes Usage: stern pod-query [flags] ``` - If it is not installed, the easiest method is to download a [binary release](https://github.com/wercker/stern/releases) - The following commands will install Stern on a Linux Intel 64 bit machine: ```bash sudo curl -L -o /usr/local/bin/stern \ https://github.com/wercker/stern/releases/download/1.8.0/stern_linux_amd64 sudo chmod +x /usr/local/bin/stern ``` .debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)] --- ## Using Stern - There are two ways to specify the pods for which we want to see the logs: - `-l` followed by a selector expression (like with many `kubectl` commands) - with a "pod query", i.e. a regex used to match pod names - These two ways can be combined if necessary .exercise[ - View the logs for all the rng containers: ```bash stern rng ``` <!-- ```wait HTTP/1.1``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)] --- ## Stern convenient options - The `--tail N` flag shows the last `N` lines for each container (Instead of showing the logs since the creation of the container) - The `-t` / `--timestamps` flag shows timestamps - The `--all-namespaces` flag is self-explanatory .exercise[ - View what's up with the `weave` system containers: ```bash stern --tail 1 --timestamps --all-namespaces weave ``` <!-- ```wait weave-npc``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)] --- ## Using Stern with a selector - When specifying a selector, we can omit the value for a label - This will match all objects having that 
label (regardless of the value) - Everything created with `kubectl run` has a label `run` - We can use that property to view the logs of all the pods created with `kubectl run` .exercise[ - View the logs for all the things started with `kubectl run`: ```bash stern -l run ``` <!-- ```wait units of work``` ```keys ^C``` --> ] .debug[[k8s/logs-cli.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/logs-cli.md)] --- class: pic .interstitial[] --- name: toc-managing-stacks-with-helm class: title Managing stacks with Helm .nav[ [Previous section](#toc-accessing-logs-from-the-cli) | [Back to table of contents](#toc-chapter-4) | [Next section](#toc-namespaces) ] .debug[(automatically generated title slide)] --- # Managing stacks with Helm - We created our first resources with `kubectl run`, `kubectl expose` ... - We have also created resources by loading YAML files with `kubectl apply -f` - For larger stacks, managing thousands of lines of YAML is unreasonable - These YAML bundles need to be customized with variable parameters (E.g.: number of replicas, image version to use ...) - It would be nice to have an organized, versioned collection of bundles - It would be nice to be able to upgrade/rollback these bundles carefully - [Helm](https://helm.sh/) is an open source project offering all these things! 
.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Helm concepts

- `helm` is a CLI tool

- `tiller` is its companion server-side component

- A "chart" is an archive containing templatized YAML bundles

- Charts are versioned

- Charts can be stored on private or public repositories

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Installing Helm

- If the `helm` CLI is not installed in your environment, install it

.exercise[

- Check if `helm` is installed:
  ```bash
  helm
  ```

- If it's not installed, run the following command:
  ```bash
  curl https://raw.githubusercontent.com/kubernetes/helm/master/scripts/get | bash
  ```

]

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Installing Tiller

- Tiller is composed of a *service* and a *deployment* in the `kube-system` namespace

- They can be managed (installed, upgraded...) with the `helm` CLI

.exercise[

- Deploy Tiller:
  ```bash
  helm init
  ```

]

If Tiller was already installed, don't worry: this won't break it.

At the end of the install process, you will see:

```
Happy Helming!
```

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Fix account permissions

- Helm's permission model requires us to tweak permissions

- In a more realistic deployment, you might create per-user or per-team
  service accounts, roles, and role bindings

.exercise[

- Grant the `cluster-admin` role to the `kube-system:default` service account:
  ```bash
  kubectl create clusterrolebinding add-on-cluster-admin \
      --clusterrole=cluster-admin --serviceaccount=kube-system:default
  ```

]

(Defining the exact roles and permissions on your cluster requires
a deeper knowledge of Kubernetes' RBAC model. The command above is
fine for personal and development clusters.)
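
As a small step towards the more realistic setup mentioned above, Tiller can run under its own service account instead of `kube-system:default`. The sketch below is an assumption-laden illustration, not part of the workshop: the account and binding name `tiller` and the wrapper function `setup_tiller_account` are made up for this example, and it still grants `cluster-admin`, so it only narrows *which* account holds that role.

```bash
# Hypothetical wrapper: nothing runs until the function is called
setup_tiller_account() {
  # Dedicated service account for Tiller (the name "tiller" is an assumption)
  kubectl -n kube-system create serviceaccount tiller

  # Bind cluster-admin to that account only, not to kube-system:default
  kubectl create clusterrolebinding tiller \
      --clusterrole=cluster-admin --serviceaccount=kube-system:tiller

  # Point Tiller at the new account; --upgrade is needed if Tiller is already installed
  helm init --service-account tiller --upgrade
}

# Usage (requires access to a cluster):
#   setup_tiller_account
```

Tightening this further means replacing `cluster-admin` with roles scoped to what each team actually needs, which is where the RBAC knowledge mentioned above comes in.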
.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## View available charts

- A public repo is pre-configured when installing Helm

- We can view available charts with `helm search` (and an optional keyword)

.exercise[

- View all available charts:
  ```bash
  helm search
  ```

- View charts related to `prometheus`:
  ```bash
  helm search prometheus
  ```

]

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Install a chart

- Most charts use `LoadBalancer` service types by default

- Most charts require persistent volumes to store data

- We need to relax these requirements a bit

.exercise[

- Install the Prometheus metrics collector on our cluster:
  ```bash
  helm install stable/prometheus \
       --set server.service.type=NodePort \
       --set server.persistentVolume.enabled=false
  ```

]

Where do these `--set` options come from?

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Inspecting a chart

- `helm inspect` shows details about a chart (including available options)

.exercise[

- See the metadata and all available options for `stable/prometheus`:
  ```bash
  helm inspect stable/prometheus
  ```

]

The chart's metadata includes a URL to the project's home page.

(Sometimes it conveniently points to the documentation for the chart.)

.debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)]
---

## Creating a chart

- We are going to show a way to create a *very simplified* chart

- In a real chart, *lots of things* would be templatized

  (Resource names, service types, number of replicas...)
.exercise[ - Create a sample chart: ```bash helm create dockercoins ``` - Move away the sample templates and create an empty template directory: ```bash mv dockercoins/templates dockercoins/default-templates mkdir dockercoins/templates ``` ] .debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)] --- ## Exporting the YAML for our application - The following section assumes that DockerCoins is currently running .exercise[ - Create one YAML file for each resource that we need: .small[ ```bash while read kind name; do kubectl get -o yaml --export $kind $name > dockercoins/templates/$name-$kind.yaml done <<EOF deployment worker deployment hasher daemonset rng deployment webui deployment redis service hasher service rng service webui service redis EOF ``` ] ] .debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)] --- ## Testing our helm chart .exercise[ - Let's install our helm chart! (`dockercoins` is the path to the chart) ``` helm install dockercoins ``` ] -- - Since the application is already deployed, this will fail:<br> `Error: release loitering-otter failed: services "hasher" already exists` - To avoid naming conflicts, we will deploy the application in another *namespace* .debug[[k8s/helm.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/helm.md)] --- class: pic .interstitial[] --- name: toc-namespaces class: title Namespaces .nav[ [Previous section](#toc-managing-stacks-with-helm) | [Back to table of contents](#toc-chapter-4) | [Next section](#toc-network-policies) ] .debug[(automatically generated title slide)] --- # Namespaces - We cannot have two resources with the same name (Or can we...?) -- - We cannot have two resources *of the same type* with the same name (But it's OK to have a `rng` service, a `rng` deployment, and a `rng` daemon set!) 
-- - We cannot have two resources of the same type with the same name *in the same namespace* (But it's OK to have e.g. two `rng` services in different namespaces!) -- - In other words: **the tuple *(type, name, namespace)* needs to be unique** (In the resource YAML, the type is called `Kind`) .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Pre-existing namespaces - If we deploy a cluster with `kubeadm`, we have three namespaces: - `default` (for our applications) - `kube-system` (for the control plane) - `kube-public` (contains one secret used for cluster discovery) - If we deploy differently, we may have different namespaces .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Creating namespaces - Creating a namespace is done with the `kubectl create namespace` command: ```bash kubectl create namespace blue ``` - We can also get fancy and use a very minimal YAML snippet, e.g.: ```bash kubectl apply -f- <<EOF apiVersion: v1 kind: Namespace metadata: name: blue EOF ``` - The two methods above are identical - If we are using a tool like Helm, it will create namespaces automatically .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Using namespaces - We can pass a `-n` or `--namespace` flag to most `kubectl` commands: ```bash kubectl -n blue get svc ``` - We can also use *contexts* - A context is a *(user, cluster, namespace)* tuple - We can manipulate contexts with the `kubectl config` command .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Creating a context - We are going to create a context for the `blue` namespace .exercise[ - View existing contexts to see the cluster name and the current user: ```bash kubectl config get-contexts ``` - Create 
a new context:
  ```bash
  kubectl config set-context blue --namespace=blue \
      --cluster=kubernetes --user=kubernetes-admin
  ```

]

We have created a context, but it is just a set of configuration values.

The namespace doesn't exist yet.

.debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)]
---

## Using a context

- Let's switch to our new context and deploy the DockerCoins chart

.exercise[

- Use the `blue` context:
  ```bash
  kubectl config use-context blue
  ```

- Deploy DockerCoins:
  ```bash
  helm install dockercoins
  ```

]

In the last command line, `dockercoins` is just the local path where
we created our Helm chart before.

.debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)]
---

## Viewing the deployed app

- Let's see if our Helm chart worked correctly!

.exercise[

- Retrieve the port number allocated to the `webui` service:
  ```bash
  kubectl get svc webui
  ```

- Point our browser to http://X.X.X.X:3xxxx

]

Note: it might take a minute or two for the app to be up and running.

.debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)]
---

## Namespaces and isolation

- Namespaces *do not* provide isolation

- A pod in the `green` namespace can communicate with a pod in the `blue` namespace

- A pod in the `default` namespace can communicate with a pod in the `kube-system` namespace

- CoreDNS uses a different subdomain for each namespace

- Example: from any pod in the cluster, you can connect to the Kubernetes API with:

  `https://kubernetes.default.svc.cluster.local:443/`

.debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)]
---

## Isolating pods

- Actual isolation is implemented with *network policies*

- Network policies are resources (like deployments, services, namespaces...)
- Network policies specify which flows are allowed: - between pods - from pods to the outside world - and vice-versa .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Switch back to the default namespace - Let's make sure that we don't run future exercises in the `blue` namespace .exercise[ - View the names of the contexts: ```bash kubectl config get-contexts ``` - Switch back to the original context: ```bash kubectl config use-context kubernetes-admin@kubernetes ``` ] .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## Switching namespaces more easily - Defining a new context for each namespace can be cumbersome - We can also alter the current context with this one-liner: ```bash kubectl config set-context --current --namespace=foo ``` - We can also use a little helper tool called `kubens`: ```bash # Switch to namespace foo kubens foo # Switch back to the previous namespace kubens - ``` .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## `kubens` and `kubectx` - With `kubens`, we can switch quickly between namespaces - With `kubectx`, we can switch quickly between contexts - Both tools are simple shell scripts available from https://github.com/ahmetb/kubectx - On our clusters, they are installed as `kns` and `kctx` (for brevity and to avoid completion clashes between `kubectx` and `kubectl`) .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- ## `kube-ps1` - It's easy to lose track of our current cluster / context / namespace - `kube-ps1` makes it easy to track these, by showing them in our shell prompt - It's a simple shell script available from https://github.com/jonmosco/kube-ps1 - On our clusters, `kube-ps1` is installed and included in `PS1`: ``` 
[123.45.67.89] `(kubernetes-admin@kubernetes:default)` docker@node1 ~ ``` (The highlighted part is `context:namespace`, managed by `kube-ps1`) - Highly recommended if you work across multiple contexts or namespaces! .debug[[k8s/namespaces.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/namespaces.md)] --- class: pic .interstitial[] --- name: toc-network-policies class: title Network policies .nav[ [Previous section](#toc-namespaces) | [Back to table of contents](#toc-chapter-4) | [Next section](#toc-links-and-resources) ] .debug[(automatically generated title slide)] --- # Network policies - Namespaces help us to *organize* resources - Namespaces do not provide isolation - By default, every pod can contact every other pod - By default, every service accepts traffic from anyone - If we want this to be different, we need *network policies* .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## What's a network policy? A network policy is defined by the following things. - A *pod selector* indicating which pods it applies to e.g.: "all pods in namespace `blue` with the label `zone=internal`" - A list of *ingress rules* indicating which inbound traffic is allowed e.g.: "TCP connections to ports 8000 and 8080 coming from pods with label `zone=dmz`, and from the external subnet 4.42.6.0/24, except 4.42.6.5" - A list of *egress rules* indicating which outbound traffic is allowed A network policy can provide ingress rules, egress rules, or both. .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## How do network policies apply? 
- A pod can be "selected" by any number of network policies

- If a pod isn't selected by any network policy, then its traffic is unrestricted

  (In other words: in the absence of network policies, all traffic is allowed)

- If a pod is selected by at least one network policy, then all traffic is blocked ...

  ... unless it is explicitly allowed by one of these network policies

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

class: extra-details

## Traffic filtering is flow-oriented

- Network policies deal with *connections*, not individual packets

- Example: to allow HTTP (80/tcp) connections to pod A, you only need an ingress rule

  (You do not need a matching egress rule to allow response traffic to go through)

- This also applies to UDP traffic

  (Allowing DNS traffic can be done with a single rule)

- Network policy implementations use stateful connection tracking

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Pod-to-pod traffic

- Connections from pod A to pod B have to be allowed by both pods:

  - pod A has to be unrestricted, or allow the connection as an *egress* rule

  - pod B has to be unrestricted, or allow the connection as an *ingress* rule

- As a consequence: if a network policy restricts traffic going from/to a pod,
  <br/>
  the restriction cannot be overridden by a network policy selecting another pod

- This prevents an entity managing network policies in namespace A
  (but without permission to do so in namespace B)
  from adding network policies giving them access to namespace B

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## The rationale for network policies

- In network security, it is generally considered better to "deny all, then allow selectively"

  (The other approach, "allow all, then block selectively" makes it too easy to leave holes)

- As
soon as one network policy selects a pod, the pod enters this "deny all" logic - Further network policies can open additional access - Good network policies should be scoped as precisely as possible - In particular: make sure that the selector is not too broad (Otherwise, you end up affecting pods that were otherwise well secured) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Our first network policy This is our game plan: - run a web server in a pod - create a network policy to block all access to the web server - create another network policy to allow access only from specific pods .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Running our test web server .exercise[ - Let's use the `nginx` image: ```bash kubectl run testweb --image=nginx ``` - Find out the IP address of the pod with one of these two commands: ```bash kubectl get pods -o wide -l run=testweb IP=$(kubectl get pods -l run=testweb -o json | jq -r .items[0].status.podIP) ``` - Check that we can connect to the server: ```bash curl $IP ``` ] The `curl` command should show us the "Welcome to nginx!" page. .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Adding a very restrictive network policy - The policy will select pods with the label `run=testweb` - It will specify an empty list of ingress rules (matching nothing) .exercise[ - Apply the policy in this YAML file: ```bash kubectl apply -f ~/container.training/k8s/netpol-deny-all-for-testweb.yaml ``` - Check if we can still access the server: ```bash curl $IP ``` ] The `curl` command should now time out. 
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Looking at the network policy

This is the file that we applied:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: deny-all-for-testweb
spec:
  podSelector:
    matchLabels:
      run: testweb
  ingress: []
```

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Allowing connections only from specific pods

- We want to allow traffic from pods with the label `run=testcurl`

- Reminder: this label is automatically applied when we do `kubectl run testcurl ...`

.exercise[

- Apply another policy:
  ```bash
  kubectl apply -f ~/container.training/k8s/netpol-allow-testcurl-for-testweb.yaml
  ```

]

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Looking at the network policy

This is the second file that we applied:

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-testcurl-for-testweb
spec:
  podSelector:
    matchLabels:
      run: testweb
  ingress:
  - from:
    - podSelector:
        matchLabels:
          run: testcurl
```

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Testing the network policy

- Let's create pods with, and without, the required label

.exercise[

- Try to connect to testweb from a pod with the `run=testcurl` label:
  ```bash
  kubectl run testcurl --rm -i --image=centos -- curl -m3 $IP
  ```

- Try to connect to testweb from a pod with a different label:
  ```bash
  kubectl run testkurl --rm -i --image=centos -- curl -m3 $IP
  ```

]

The first command will work (and show the "Welcome to nginx!" page).

The second command will fail and time out after 3 seconds.

(The timeout is obtained with the `-m3` option.)
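
The richer ingress rule described earlier in words (TCP ports 8000 and 8080, from pods labeled `zone=dmz` and from subnet 4.42.6.0/24 except 4.42.6.5, applied to pods labeled `zone=internal` in namespace `blue`) could be written roughly as follows. This is a sketch only: the policy name and file name are made up for this example, and the snippet just writes the file locally without sending anything to the cluster.

```bash
# Write a hypothetical policy to a local file (nothing is applied yet)
cat > netpol-ingress-example.yaml <<'EOF'
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-dmz-and-subnet   # hypothetical name
  namespace: blue
spec:
  podSelector:
    matchLabels:
      zone: internal
  ingress:
  - from:
    - podSelector:
        matchLabels:
          zone: dmz
    - ipBlock:
        cidr: 4.42.6.0/24
        except:
        - 4.42.6.5/32
    ports:
    - protocol: TCP
      port: 8000
    - protocol: TCP
      port: 8080
EOF

# To try it on a cluster whose network plugin supports ipBlock:
#   kubectl apply -f netpol-ingress-example.yaml
```

The two entries under `from` are alternatives (either source is accepted), while `ports` restricts both of them to the listed TCP ports.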
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## An important warning - Some network plugins only have partial support for network policies - For instance, Weave [doesn't support ipBlock (yet)](https://github.com/weaveworks/weave/issues/3168) - Weave added support for egress rules [in version 2.4](https://github.com/weaveworks/weave/pull/3313) (released in July 2018) - Unsupported features might be silently ignored (Making you believe that you are secure, when you're not) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Network policies, pods, and services - Network policies apply to *pods* - A *service* can select multiple pods (And load balance traffic across them) - It is possible that we can connect to some pods, but not some others (Because of how network policies have been defined for these pods) - In that case, connections to the service will randomly pass or fail (Depending on whether the connection was sent to a pod that we have access to or not) .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Network policies and namespaces - A good strategy is to isolate a namespace, so that: - all the pods in the namespace can communicate together - other namespaces cannot access the pods - external access has to be enabled explicitly - Let's see what this would look like for the DockerCoins app! .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Network policies for DockerCoins - We are going to apply two policies - The first policy will prevent traffic from other namespaces - The second policy will allow traffic to the `webui` pods - That's all we need for that app! 
.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Blocking traffic from other namespaces

This policy selects all pods in the current namespace.

It allows traffic only from pods in the current namespace.

(An empty `podSelector` means "all pods".)

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: deny-from-other-namespaces
spec:
  podSelector: {}
  ingress:
  - from:
    - podSelector: {}
```

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Allowing traffic to `webui` pods

This policy selects all pods with label `run=webui`.

It allows traffic from any source.

(An empty `from` field means "all sources".)

```yaml
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-webui
spec:
  podSelector:
    matchLabels:
      run: webui
  ingress:
  - from: []
```

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Applying both network policies

- Both network policies are declared in the file `k8s/netpol-dockercoins.yaml`

.exercise[

- Apply the network policies:
  ```bash
  kubectl apply -f ~/container.training/k8s/netpol-dockercoins.yaml
  ```

- Check that we can still access the web UI from outside
  <br/>
  (and that the app is still working correctly!)

- Check that we can't connect anymore to `rng` or `hasher` through their ClusterIP

]

Note: using `kubectl proxy` or `kubectl port-forward` allows us to connect
regardless of existing network policies. This allows us to debug and
troubleshoot easily, without having to poke holes in our firewall.

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]
---

## Cleaning up our network policies

- The network policies that we have installed block all traffic to the default namespace

- We should remove them, otherwise further exercises will fail!
.exercise[ - Remove all network policies: ```bash kubectl delete networkpolicies --all ``` ] .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Protecting the control plane - Should we add network policies to block unauthorized access to the control plane? (etcd, API server, etc.) -- - At first, it seems like a good idea ... -- - But it *shouldn't* be necessary: - not all network plugins support network policies - the control plane is secured by other methods (mutual TLS, mostly) - the code running in our pods can reasonably expect to contact the API <br/> (and it can do so safely thanks to the API permission model) - If we block access to the control plane, we might disrupt legitimate code - ... Without necessarily improving security .debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)] --- ## Further resources - As always, the [Kubernetes documentation](https://kubernetes.io/docs/concepts/services-networking/network-policies/) is a good starting point - The API documentation has a lot of detail about the format of various objects: - [NetworkPolicy](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicy-v1-networking-k8s-io) - [NetworkPolicySpec](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicyspec-v1-networking-k8s-io) - [NetworkPolicyIngressRule](https://kubernetes.io/docs/reference/generated/kubernetes-api/v1.12/#networkpolicyingressrule-v1-networking-k8s-io) - etc. 
- And two resources by [Ahmet Alp Balkan](https://ahmet.im/):

  - a [very good talk about network policies](https://www.youtube.com/watch?list=PLj6h78yzYM2P-3-xqvmWaZbbI1sW-ulZb&v=3gGpMmYeEO8) at KubeCon North America 2017

  - a repository of [ready-to-use recipes](https://github.com/ahmetb/kubernetes-network-policy-recipes) for network policies

.debug[[k8s/netpol.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/netpol.md)]

---

## Options for our first production cluster

- Get a managed cluster from a major cloud provider (AKS, EKS, GKE...)

  (price: $, difficulty: medium)

- Hire someone to deploy it for us

  (price: $$, difficulty: easy)

- Do it ourselves

  (price: $-$$$, difficulty: hard)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## One big cluster vs. multiple small ones

- Yes, it is possible to have prod+dev in a single cluster

  (and implement good isolation and security with RBAC, network policies...)

- But it is not a good idea to do that for our first deployment

- Start with a production cluster + at least a test cluster

- Implement and check RBAC and isolation on the test cluster

  (e.g. deploy multiple test versions side-by-side)

- Make sure that all our devs have usable dev clusters

  (whether it's a local minikube or a full-blown multi-node cluster)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Stateful services (databases etc.)
- As a first step, it is wiser to keep stateful services *outside* of the cluster

- Exposing them to pods can be done with multiple solutions:

  - `ExternalName` services
    <br/>
    (`redis.blue.svc.cluster.local` will be a `CNAME` record)

  - `ClusterIP` services with explicit `Endpoints`
    <br/>
    (instead of letting Kubernetes generate the endpoints from a selector)

  - Ambassador services
    <br/>
    (application-level proxies that can provide credentials injection and more)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Stateful services (second take)

- If we want to host stateful services on Kubernetes, we can use:

  - a storage provider

  - persistent volumes, persistent volume claims

  - stateful sets

- Good questions to ask:

  - what's the *operational cost* of running this service ourselves?

  - what do we gain by deploying this stateful service on Kubernetes?

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## HTTP traffic handling

- *Services* are layer 4 constructs

- HTTP is a layer 7 protocol

- It is handled by *ingresses* (a different resource kind)

- *Ingresses* allow:

  - virtual host routing
  - session stickiness
  - URI mapping
  - and much more!

- Check out e.g. [Træfik](https://docs.traefik.io/user-guide/kubernetes/)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Logging

- Logging is delegated to the container engine

- Logs are exposed through the API

- Logs are also accessible through local files (`/var/log/containers`)

- Log shipping to a central platform is usually done through these files

  (e.g.
with an agent bind-mounting the log directory)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Metrics

- The kubelet embeds [cAdvisor](https://github.com/google/cadvisor), which exposes container metrics

  (cAdvisor might be separated in the future for more flexibility)

- It is a good idea to start with [Prometheus](https://prometheus.io/)

  (even if you end up using something else)

- Starting from Kubernetes 1.8, we can use the [Metrics API](https://kubernetes.io/docs/tasks/debug-application-cluster/core-metrics-pipeline/)

- [Heapster](https://github.com/kubernetes/heapster) was a popular add-on

  (but is being [deprecated](https://github.com/kubernetes/heapster/blob/master/docs/deprecation.md) starting with Kubernetes 1.11)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Managing the configuration of our applications

- Two constructs are particularly useful: secrets and config maps

- They allow us to expose arbitrary information to our containers

- **Avoid** storing configuration in container images

  (There are some exceptions to that rule, but it's generally a Bad Idea)

- **Never** store sensitive information in container images

  (It's the container equivalent of the password on a post-it note on your screen)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Managing stack deployments

- The best deployment tool will vary, depending on:

  - the size and complexity of your stack(s)

  - how often you change it (i.e.
add/remove components)

  - the size and skills of your team

- A few examples:

  - shell scripts invoking `kubectl`

  - YAML resource descriptions committed to a repo

  - [Helm](https://github.com/kubernetes/helm) (~package manager)

  - [Spinnaker](https://www.spinnaker.io/) (Netflix's CD platform)

  - [Brigade](https://brigade.sh/) (event-driven scripting; no YAML)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Cluster federation

--

Sorry Star Trek fans, this is not the federation you're looking for!

--

(If I add "Your cluster is in another federation" I might get a 3rd fandom wincing!)

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

## Cluster federation

- Kubernetes master operation relies on etcd

- etcd uses the [Raft](https://raft.github.io/) protocol

- Raft recommends low latency between nodes

- What if our cluster spreads to multiple regions?
--

- Break it down into local clusters

- Group them into a *cluster federation*

- Synchronize resources across clusters

- Discover resources across clusters

.debug[[k8s/whatsnext.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/whatsnext.md)]

---

class: pic

.interstitial[]

---

name: toc-links-and-resources
class: title

Links and resources

.nav[
[Previous section](#toc-network-policies)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-thank-you)
]

.debug[(automatically generated title slide)]

---

# Links and resources

- [Microsoft Learn](https://docs.microsoft.com/learn/)

- [Azure Kubernetes Service](https://docs.microsoft.com/azure/aks/)

- [Cloud Developer Advocates](https://developer.microsoft.com/advocates/)

- [Kubernetes Community](https://kubernetes.io/community/) - Slack, Google Groups, meetups

- [Local meetups](https://www.meetup.com/)

- [devopsdays](https://www.devopsdays.org/)

.footnote[These slides (and future updates) are on → http://container.training/]

.debug[[k8s/links-bridget.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/k8s/links-bridget.md)]

---

class: title, self-paced

Thank you!

.debug[[shared/thankyou.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/thankyou.md)]

---

class: pic

.interstitial[]

---

name: toc-thank-you
class: title

Thank you!

.nav[
[Previous section](#toc-links-and-resources)
|
[Back to table of contents](#toc-chapter-4)
|
[Next section](#toc-)
]

.debug[(automatically generated title slide)]

---

# Thank you!
- The clusters will be shut down tomorrow morning

- If you like:

  - [rate this tutorial on the Velocity website](https://conferences.oreilly.com/velocity/vl-ny/public/schedule/evaluate/70102)

  - [tweet about it](https://twitter.com/intent/tweet?url=https%3A%2F%2Fcontainer.training&text=Learning%20k8s%20with%20@bridgetkromhout%21&hashtags=VelocityConf), mentioning @bridgetkromhout and #VelocityConf

- [questions, comments, pull requests, workshop invitations, etc](https://github.com/jpetazzo/container.training/)

.debug[[shared/thankyou.md](https://github.com/jpetazzo/container.training/tree/velny-k8s101-2018/slides/shared/thankyou.md)]